10 Best Data Curation Tools for Computer Vision [2026]

Table of contents

A practical guide to the best data curation tools for machine learning in 2026, covering LightlyStudio, Encord, Labelbox, SuperAnnotate, Clarifai, Cleanlab, and Labellerr. Includes key evaluation criteria, the 12 main steps of the data curation process, best practices, common challenges, and how data curation differs from data cleaning and data management. Aimed at ML teams looking to improve dataset quality and reduce labeling costs.

Effective data curation is now a foundational requirement for building reliable machine learning models. Rather than collecting ever-larger datasets, teams are prioritizing smarter selection, cleaner labels, and tighter feedback loops between data and model performance. Here are the best data curation tools for machine learning in 2026 and how to choose the right one.

In the era of big data and artificial intelligence, effective data curation has become essential. Rather than simply collecting large volumes of data, teams now focus on organizing and transforming raw information into a refined, reliable resource that drives informed decision-making.

Data curation is especially critical in machine learning, ensuring training data used for model development is accurate, relevant, and consistent. Without proper data curation, models risk underperforming or producing biased results. Well-curated data can significantly enhance model performance, reduce errors, and save valuable time.

Data curation transforms raw and error-ridden data into valuable structured assets. It helps organizations maintain high-quality data standards and unlock the full potential of their data assets for analysis and AI initiatives throughout the data lifecycle. Effective data curation tools are broadly categorized into AI-powered training data curation and enterprise data governance and cataloging.

In this article, we cover the top data curation tools available in 2026, highlight key features to look for, and outline the main steps of the data curation process.

See Lightly in Action

Curate and label data, fine-tune foundation models — all in one platform.

Book a Demo

What to Look for in a Data Curation Tool

Choosing the best data curation tools requires evaluating several key factors.

Data Prioritization

The tool should automate data prioritization — filtering, sorting, and selecting the most valuable data from large datasets to reduce manual effort and focus labeling on the samples most likely to improve model accuracy.

Visualizations

Look for customizable visualization tools — tables, plots, image grids — that help teams analyze data distributions and identify biases, outliers, and edge cases. Good data visualization is essential for smart analysis.

Model-Assisted Insights

The tool should connect model performance back to the dataset. Features like confusion matrices help surface data quality issues such as false positives and negatives, enabling targeted corrections.

Modality and Format Support

Ensure the tool handles multiple data types — images, video, text, medical imaging — and supports annotation formats like bounding boxes, semantic segmentation masks, polylines, and keypoints.

User-Friendly Interface

A user friendly interface that serves both data scientists and non-technical annotators reduces friction. Support for automated processes and seamless integration with existing workflows is equally important.

Annotation and Collaboration

Look for annotation workflows with version control, quality assurance checks, and support for multiple users working simultaneously on shared machine learning datasets.

Pipeline and Data Management

The best data curation tools integrate into the machine learning pipeline, connect to cloud storage, handle large datasets efficiently, and support import and export across common file formats and digital formats.

What Are the Main Steps of the Data Curation Process?

The data curation process converts raw data collected from various sources into a reliable resource for machine learning. Curation involves transforming collections of data into collections of datasets through disciplined stages.

1. Data Collection

The first step in the data curation process is data collection, which involves gathering data from databases, websites, sensors, and social media. Identifying and collecting relevant data is critical for downstream model performance.

2. Data Cleaning

Data cleaning is the second step in the data curation process, ensuring quality and accuracy by handling missing values, removing duplicates, correcting inconsistencies, and filtering outliers. Data preparation and cleaning involves removing duplicates and fixing inconsistencies to ensure accuracy.

3. Data Annotation

Data annotation is a step in the data curation process that involves adding labels to data, which is crucial for supervised learning tasks. Annotation quality directly determines what computer vision models and NLP models can learn.

4. Data Transformation

Data transformation prepares cleaned and annotated data into a format suitable for machine learning algorithms — normalization, one-hot encoding, resizing, or converting between annotation formats.

5. Data Integration

Data integration combines data from multiple sources into a unified view through record linkage and schema mapping. Managing large-scale data requires automated platforms capable of handling high volume and complexity.

6. Data Enrichment

Data enrichment adds relevant context to existing data — metadata tags, geolocation, timestamps — improving the accuracy of predictive models. Data preparation includes cleaning and standardizing data into a usable format, flagging errors, and adding metadata.

7. Metadata Management

Metadata management organizes detailed metadata about the data itself: storage location, file formats, collection method, data lineage, and quality indicators. Good metadata management makes datasets findable, accessible, interoperable, and reusable (FAIR).

8. Data Validation

Data validation implements systems to monitor accuracy, completeness, and consistency — a core part of quality assessment in any data curation workflow.

9. Data Privacy Enforcement

Protecting sensitive data means enforcing access controls, using encryption, and following regulations such as GDPR. Implementing strong data governance policies is a best practice for protecting data privacy during curation.

10. Data Lineage Establishment

Data lineage tracks origin, transformations, and dependencies throughout the data lifecycle. Data lineage establishment is crucial for maintaining data quality and traceability.

11. Data Maintenance

Data maintenance ensures datasets remain relevant and valuable for ongoing machine learning tasks as the world changes and new edge cases emerge.

12. Regular Monitoring

Regular monitoring of the data curation process is essential to identify and resolve issues, including configuring metrics to measure data accuracy.

Top Data Curation Tools (2026)

The following section reviews the leading curation tools available as of April 2026, verified against current documentation and public sources.

1. LightlyStudio

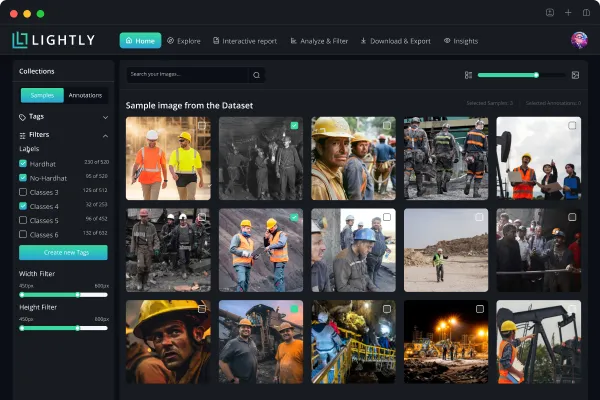

LightlyStudio is an open-source data curation and labeling platform for computer vision workflows, built by Lightly AI (a spin-off from ETH Zurich). Released in March 2026, it specializes in managing large image datasets using self-supervised learning, filtering out redundant data and selecting the most valuable data for labeling.

LightlyStudio is built around embedding-based data selection. Samples are indexed as vector representations, enabling similarity search, near-duplicate detection, and clustering across large datasets. Active learning surfaces the most informative unlabeled samples, directly improving model accuracy while reducing labeling costs. Built-in annotation and QA tools are integrated into the same platform, keeping the data curation workflow in one place.

The platform uses a DuckDB backend with performance-critical components in Rust for fast interaction on large image datasets. A Python-first SDK (pip-installable) makes it easy to integrate into any machine learning pipeline. It is free to use locally and supports on-premises deployment for enterprise data handling requirements.

Key Features

- Embedding-based curation: automatic sample selection, near-duplicate detection, active learning

- Similarity search and data exploration across large datasets

- Built-in annotation and quality assurance for images and video

- On-premises and hybrid deployment; enterprise user management

- Open-source Python library (Apache-2), pip-installable SDK

- Plugin system for extending existing workflows

- Migration support from Encord, V7 Labs, Roboflow, and others

Limitations

LightlyStudio launched in March 2026 and has a shorter track record than established platforms. A hosted cloud version was announced but was not yet generally available at time of writing.

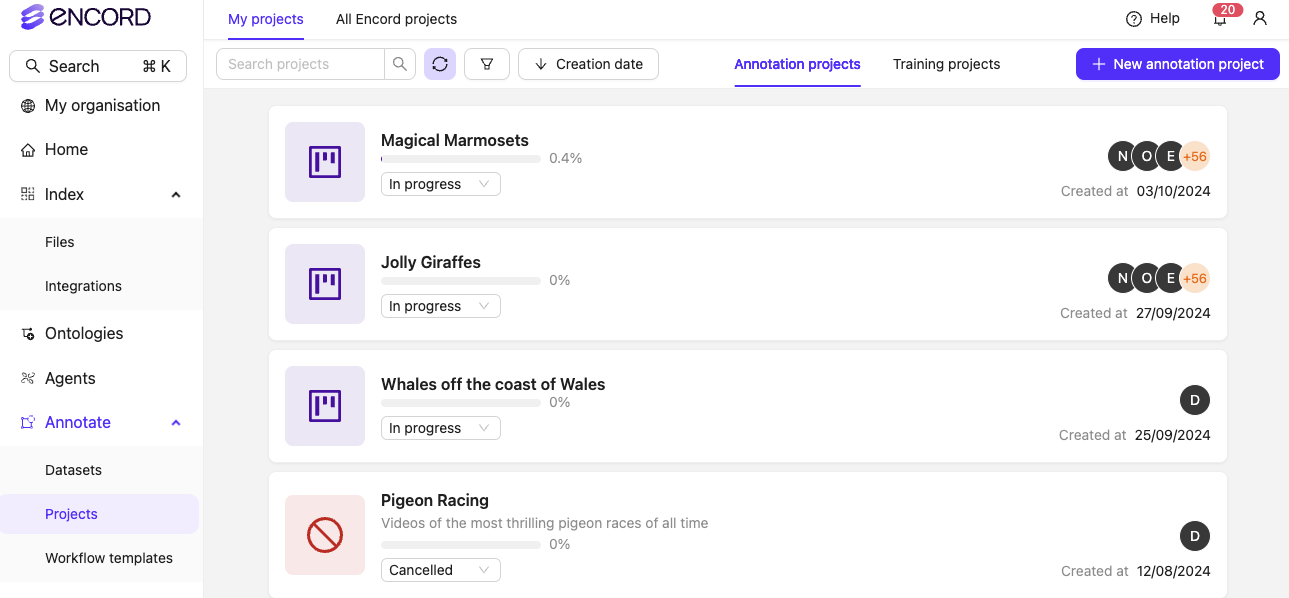

2. Encord

Encord is a unified, multimodal data platform designed for scalable annotation, curation, and model evaluation. Encord Index is an end-to-end data management and curation tool that allows teams to visualize, search, sort, and control their datasets, streamlining the curation process and improving model quality. It supports images, video, text, audio, and DICOM data types.

In February 2026, Encord raised a $60M Series C led by Wellington Management, bringing total funding to $110M. The company reports managed data volume growing from one to five petabytes over the prior year. Encord Index supports complex data types and is SOC 2, HIPAA, and GDPR compliant.

Key Features

- Multimodal support: images, video, audio, DICOM, LiDAR, text

- AI-assisted annotation with SAM-2, GPT-4o, and Whisper

- Natural language search and similarity search

- Model evaluation and data visualization tools

- SOC 2, HIPAA, and GDPR compliance

- Seamless integration with AWS, Azure, and GCP

Limitations

Pricing is enterprise-focused and not publicly listed. Teams outside computer vision and physical AI use cases may find limited fit.

3. Labelbox

Labelbox is a leading data curation platform that enhances the training data iteration loop, helping teams improve their machine-learning models by efficiently managing and refining their datasets. It now covers both computer vision and generative AI training data, including RLHF and supervised fine-tuning for large language models.

Its managed labeling service (Alignerr) provides on-demand expert annotators. Recent updates include a redesigned workflow builder, multi-modal chat labeling with audio and video support, per-prompt rubric scoring, and annotator performance analytics.

Key Features

- AI-assisted and model-in-the-loop labeling

- Managed labeling services via the Alignerr community

- Multi-modal annotation: images, video, audio, documents

- Configurable workflow builder with consensus scoring

- Python SDK; seamless integration with ML frameworks

- Free tier available; Starter at $0.10 per LBU

Limitations

Labelbox's pivot toward generative AI has shifted some focus away from specialized computer vision curation. Costs scale quickly at enterprise volume.

4. SuperAnnotate

SuperAnnotate is a comprehensive data curation platform that offers automated annotation features powered by AI to accelerate labeling tasks while maintaining high accuracy through human-in-the-loop validation. It supports images, video, text, and audio, covering the full annotation workflows from raw data to training datasets.

The company raised a $50M Series B in 2025 (backed by Dell Technologies Capital, NVIDIA, and Databricks) and was included in the NVIDIA Enterprise AI Factory validated design. SuperAnnotate supports on-premises, hybrid, and cloud deployments.

Key Features

- AI-assisted pre-labeling and human-in-the-loop validation

- Automated quality checks and inter-annotator agreement scoring

- Advanced annotation: semantic segmentation, video tracking, 3D point clouds

- Real-time project dashboards and annotation support

- Integrations with AWS, Azure, GCP, Snowflake, Databricks

Limitations

SuperAnnotate is annotation-first and offers less embedding-based curation than dedicated curation tools. Its free tier is limited to early-stage startups.

5. Clarifai

Clarifai is an enterprise-grade platform designed to help organizations prepare, label, and manage training data for AI models, offering an end-to-end solution for data labeling across multiple modalities. Founded in 2013, it reports over 400,000 users across 170+ countries and supports natural language processing, computer vision, and audio use cases.

Clarifai's Scribe Label product provides AI-assisted annotation, auto-annotation, and vector search for dataset management. The broader platform covers model training, inference, and deployment across cloud, on-premises, and hybrid environments.

Key Features

- End-to-end: annotation, model training, inference, and deployment

- AI-assisted and auto-annotation (Scribe Label)

- Vector search for dataset indexing; comprehensive platform for the full AI lifecycle

- Multi-modal: images, video, text, audio

- Flexible deployment: cloud, on-premises, bare metal, hybrid

- Large pre-trained model marketplace

Limitations

Pricing is considered expensive by smaller teams. Documentation quality is flagged as inconsistent in some user reviews.

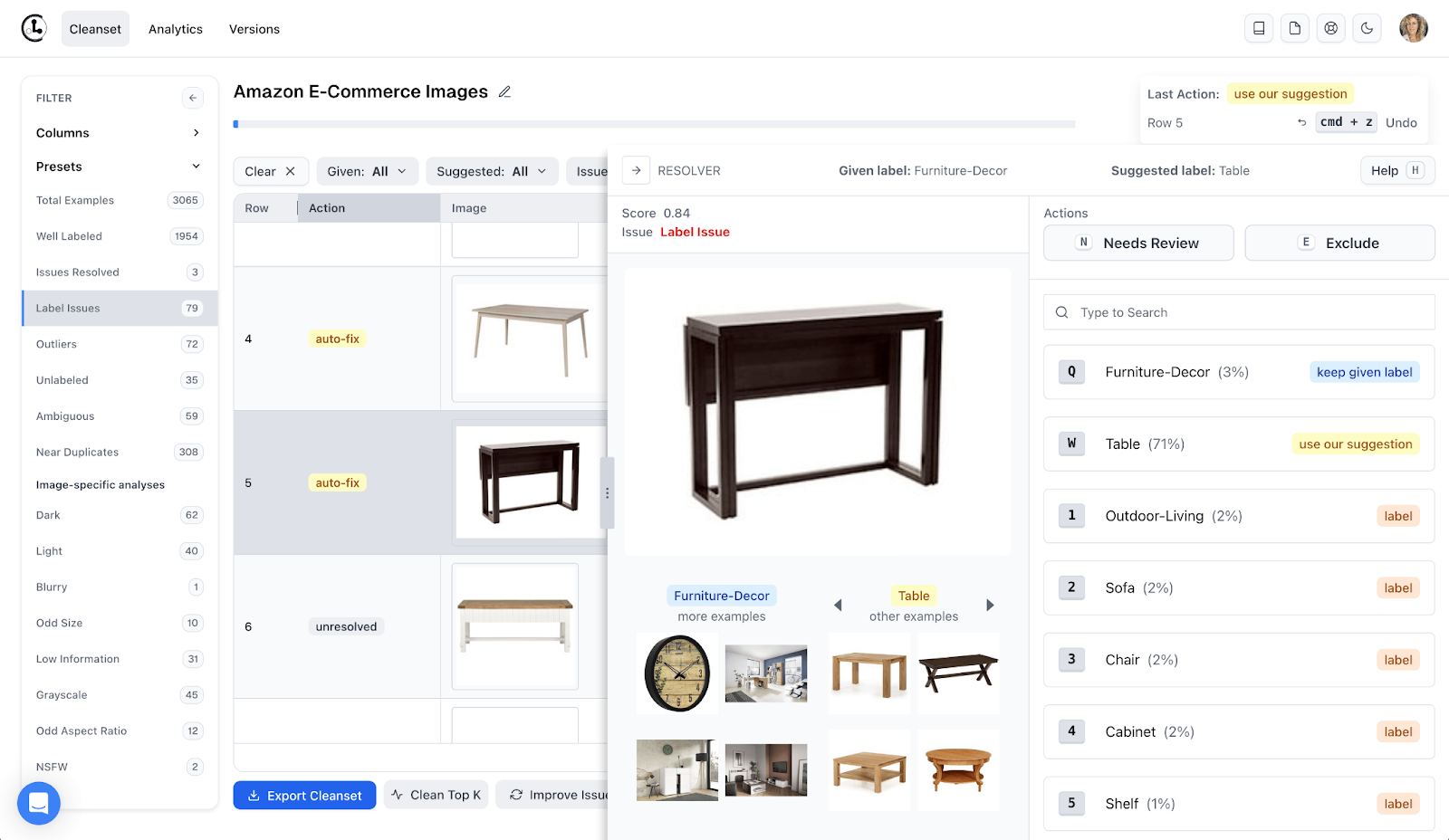

6. Cleanlab

Cleanlab uses confident learning algorithms to diagnose and fix label errors and data quality issues automatically. Rather than providing an annotation interface, it focuses on finding and correcting problems in existing labeled datasets — detecting label errors, near-duplicates, and missing values without requiring manual review.

The open-source Python library works with any model framework — PyTorch, Hugging Face, scikit-learn, XGBoost — and supports classification, regression, object detection, and annotation support for multi-annotator tasks. Modern platforms use AI to automatically detect data quality issues, while traditional tools rely on manual rules; Cleanlab exemplifies the modern approach.

Key Features

- Confident learning for automated label error detection

- Class-level quality assessment and confusion matrix analysis

- Automated label correction and removal recommendations

- Open source Python library; works with any programming language ecosystem

- Supports advanced algorithms for multi-annotator quality scoring

- Commercial platform extends to LLM and AI agent reliability

Limitations

Cleanlab requires a labeled dataset as input — it is not a full data management or annotation platform.

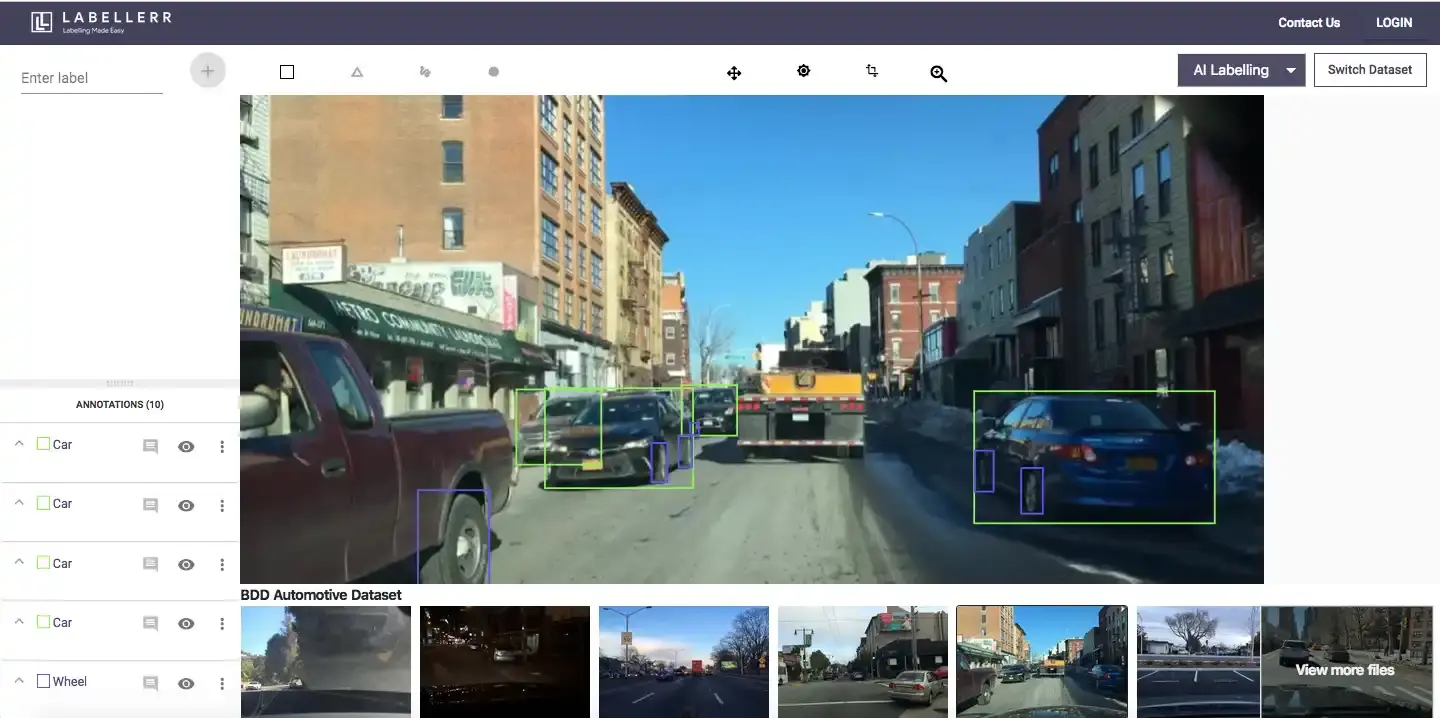

7. Labellerr

Labellerr is an AI-assisted data labeling and curation platform with strong automation capabilities. It offers prompt-based and model-assisted labeling, automated QA with feedback loops, and synthetic data generation to reduce dependence on manually collected data. It integrates with GCP Vertex AI and AWS SageMaker for seamless integration into MLOps pipelines.

Key Features

- Prompt-based and model-assisted labeling

- Automated QA, version control, and guideline management

- Synthetic dataset generation

- Real-time analytics dashboards

- GCP Vertex AI and AWS SageMaker integration; supports multiple users

Limitations

Smaller platform with less public information on enterprise compliance certifications compared to larger competitors.

Data Curation vs. Data Cleaning vs. Data Management

These terms describe different scopes of the same problem.

Data curation is the broadest process — selecting, organizing, annotating, and maintaining data for a specific purpose, including data cleaning and data management as sub-processes. Data curation adds value by enriching data with metadata, ensuring quality, and making it FAIR.

Data cleaning is a focused activity within curation: removing errors, handling missing values, and eliminating duplicate records to improve data integrity.

Data management covers data organization across the full lifecycle — how data is stored, versioned, accessed, and governed. Business intelligence and customer data workflows both depend on sound data management practices.

Understanding which is the actual bottleneck helps teams identify which curation tools will have the most impact.

Data Curation Best Practices

Prioritize data quality over quantity. Data curation tools transform raw data into high-quality, actionable, AI-ready assets. More data is not always better — smaller, well-curated training datasets routinely outperform larger ones with systematic label errors.

Automate to reduce inconsistency. Modern data curation often relies on automated tools to manage high volumes of data. Deduplication, format validation, and pre-labeling are the best candidates for automation.

Maintain detailed metadata. Metadata management ensures datasets remain findable and auditable. Data enrichment — adding relevant context to existing data — improves the accuracy of predictive models over time.

Build curation into the model loop. Regular monitoring of the data curation process is essential to identify and resolve issues. Connecting model accuracy evaluations back to the dataset is a hallmark of mature machine learning workflows.

Enforce data governance. Implementing strong data governance policies — encryption, access controls, and audit trails for sensitive data — is essential for maintaining data integrity and complying with regulations.

Challenges in Data Curation

Heterogeneous data. Data curation faces challenges such as managing heterogeneous datasets, which require integration and standardization from various formats and sources, often leading to errors and inconsistencies.

Privacy and compliance. Balancing privacy and accessibility is a significant challenge in data curation, as adhering to regulations like GDPR can compromise data utility and add complexity.

Scale and compute. Ingesting and processing large-scale data volumes is complex and requires significant computational power and storage, which can introduce cost and latency issues.

Data quality issues. Errors like incomplete records and missing data points can hinder analysis and modeling. Data quality issues compound quickly in large datasets without systematic validation.

MLOps integration. Data curation tools often require extensive manual integration work to fit specific MLOps stacks, making the selection process tedious and challenging.

Conclusion: Choosing the Best Data Curation Tools

Conclusion data curation: the right tools depend on your data types, team size, compliance requirements, and where in the machine learning pipeline you need the most support.

For computer vision teams wanting open-source, developer-first curation with built-in labeling, LightlyStudio is a strong choice — free to run locally, with on-premises enterprise options. For large-scale multimodal annotation with enterprise compliance, Encord and Labelbox are the most mature options. For diagnosing label errors in existing machine learning datasets, Cleanlab's open-source Python library is hard to beat. SuperAnnotate and Clarifai serve teams wanting a comprehensive platform from annotation through deployment.

Data curation ensures data remains accurate, accessible, and valuable for analysis and AI initiatives throughout its lifecycle. Investing in the right curation tools early pays compounding returns as datasets and teams grow.

Stay ahead in computer vision

Get exclusive insights, tips, and updates from the Lightly.ai team.

.png)

.png)