Top 50+ Real-World Computer Vision Applications Across Industries


A comprehensive 2026 guide to 50+ real-world computer vision applications across 11 industries — healthcare, biotech, automotive, retail, security, agriculture, manufacturing, smart cities, sports, finance, and geospatial/construction. Includes core CV tasks and techniques, tools for building production models, future trends like multimodal AI and edge inference, and the regulatory landscape. Aimed at ML engineers, product leaders, and industry practitioners looking to understand where computer vision is being deployed today.

Computer vision has moved from research labs into production across every major industry — powered by deep learning advances, cheaper compute, and mature open-source frameworks. From FDA-cleared medical imaging to driverless robotaxis to factory defect inspection, here are 50+ real-world computer vision applications shaping industry in 2026.

1. Introduction

Advances in deep learning have made computer vision markedly more accurate, and mature tooling has made it affordable and accessible to developers and companies of all sizes. What once required expensive custom hardware and large specialist teams can now be implemented with open-source frameworks, commodity GPUs, and pretrained computer vision models.

Computer vision relies on machine learning to process visual data: models learn from large datasets and can improve as they are retrained on new examples. Deep learning algorithms, particularly convolutional neural networks (CNNs), power most modern systems by learning to identify patterns in visual data. These advances have opened up real-world applications in industries that were previously out of reach.

By automating labor-intensive visual tasks, computer vision can raise operational efficiency and reduce costs in industries such as manufacturing and retail. Adoption is not automatic, however. Organizations must build readiness across teams, address ethical concerns, and solve technical problems such as maintaining the integrity of visual data, handling the high dimensionality of image and video inputs, and keeping labels consistent across annotators.

Real limitations persist. Building representative training data remains the primary bottleneck for most teams. Human error in labeling workflows degrades model quality. And regulatory scrutiny is increasing, particularly for high-stakes applications in healthcare, automotive, and security.

2. Core Computer Vision Tasks and Techniques

Most real-world applications of computer vision combine one or more of these foundational techniques:

Object detection identifies and locates objects within digital images or video using bounding boxes. It is the starting point for most computer vision projects — detecting vehicles, defects, pedestrians, or surgical instruments.
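Detections are usually scored against ground-truth boxes with intersection over union (IoU): the overlap area divided by the combined area. A minimal sketch, assuming boxes in `(x_min, y_min, x_max, y_max)` pixel coordinates; `iou` and `is_match` are illustrative names, and the 0.5 threshold follows the common PASCAL VOC convention rather than a universal rule.

```python
from typing import Tuple

# Assumed box convention: (x_min, y_min, x_max, y_max) in pixels.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned bounding boxes."""
    # Width and height of the overlap; zero when the boxes do not intersect.
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def is_match(pred: Box, truth: Box, threshold: float = 0.5) -> bool:
    """Treat a prediction as a correct detection when its IoU reaches the threshold."""
    return iou(pred, truth) >= threshold


# Two 10x10 boxes offset by 5 px share 50 px^2 of a 150 px^2 union.
print(iou((0, 0, 10, 10), (5, 0, 15, 10)))  # about 0.333
```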

Object tracking extends detection across video frames, following identified objects as they move. Object tracking enables player tracking in sports, vehicle tracking in traffic management, and animal monitoring in agriculture.
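Tracking-by-detection links each frame's boxes to the previous frame's. The sketch below is a deliberately simplified tracker, assuming boxes in `(x_min, y_min, x_max, y_max)` form: `GreedyIoUTracker` is a hypothetical class that matches by greedy IoU and drops a track as soon as it is missed. Production trackers such as SORT add a Kalman-filter motion model and optimal (Hungarian) assignment.

```python
from itertools import count
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = w * h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


class GreedyIoUTracker:
    """Keeps a persistent ID per object by matching each frame's detections to existing tracks."""

    def __init__(self, min_iou: float = 0.3) -> None:
        self.min_iou = min_iou
        self.tracks: Dict[int, Box] = {}  # track ID -> box seen in the previous frame
        self._next_id = count(1)

    def update(self, detections: List[Box]) -> Dict[int, Box]:
        # Score every (track, detection) pair and consider the best overlaps first.
        pairs = sorted(
            ((iou(box, det), tid, j)
             for tid, box in self.tracks.items()
             for j, det in enumerate(detections)),
            reverse=True,
        )
        updated: Dict[int, Box] = {}
        used_dets = set()
        for score, tid, j in pairs:
            if score < self.min_iou:
                break  # remaining pairs overlap too little to be the same object
            if tid in updated or j in used_dets:
                continue  # each track and each detection is matched at most once
            updated[tid] = detections[j]
            used_dets.add(j)
        # Unmatched detections start new tracks; unmatched tracks are dropped.
        for j, det in enumerate(detections):
            if j not in used_dets:
                updated[next(self._next_id)] = det
        self.tracks = updated
        return dict(updated)


tracker = GreedyIoUTracker()
print(tracker.update([(0, 0, 10, 10), (50, 50, 60, 60)]))  # two new tracks
print(tracker.update([(52, 50, 62, 60), (2, 0, 12, 10)]))  # same IDs follow the moved boxes
```

Greedy matching keeps the example short; it can swap IDs when objects cross paths, which is exactly where motion models help.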

Image recognition and object recognition assign labels to whole images or to regions within them. Machine learning models trained on large labeled datasets learn to recognize objects and identify patterns across a huge variety of visual inputs. On well-defined tasks, reported accuracy can reach 99%, comparable to human performance, although results in deployment depend on how closely real-world inputs match the training data.
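A single accuracy figure can hide poor performance on the rare class that matters, which is why medical and inspection results are usually reported as sensitivity and specificity. This sketch uses hypothetical counts and an illustrative `screening_metrics` helper:

```python
from typing import Dict


def screening_metrics(tp: int, fp: int, tn: int, fn: int) -> Dict[str, float]:
    """Standard metrics from a binary confusion matrix."""
    return {
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
        "sensitivity": tp / (tp + fn),  # recall: share of real positives the model finds
        "specificity": tn / (tn + fp),  # share of real negatives correctly cleared
        "precision": tp / (tp + fp),    # share of flagged cases that are real
    }


# Hypothetical run: 1,000 images, 20 of which contain the target condition.
# The model flags 10 of them correctly and nothing else.
metrics = screening_metrics(tp=10, fp=0, tn=980, fn=10)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

Here the model is 99% accurate yet finds only half of the positive cases, because positives make up just 2% of the data.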

Image segmentation separates detected objects at the pixel level, important for medical imaging analysis, robotic picking, and agricultural plant counting.
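For counting tasks such as plant counting, a per-pixel mask can be turned into a count by labeling connected regions. A minimal sketch in plain Python, assuming a binary mask where 1 marks the target class and separate objects do not touch (touching objects need instance segmentation instead); `count_instances` is a hypothetical helper:

```python
from collections import deque
from typing import List


def count_instances(mask: List[List[int]]) -> int:
    """Count 4-connected regions of foreground (1) pixels in a binary segmentation mask."""
    if not mask:
        return 0
    rows, cols = len(mask), len(mask[0])
    seen = [[False] * cols for _ in range(rows)]
    regions = 0
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or seen[r][c]:
                continue
            regions += 1  # an unvisited foreground pixel starts a new region
            seen[r][c] = True
            queue = deque([(r, c)])
            while queue:  # breadth-first flood fill marks the whole region
                y, x = queue.popleft()
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    if 0 <= ny < rows and 0 <= nx < cols and mask[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
    return regions


# Toy plant-vs-soil mask (1 = plant pixel) containing three separate plants.
mask = [
    [1, 1, 0, 0, 1],
    [1, 0, 0, 0, 1],
    [0, 0, 1, 0, 0],
    [0, 0, 1, 1, 0],
]
print(count_instances(mask))  # 3
```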

Pose estimation identifies the position of key points on a body to support patient rehabilitation, sports biomechanics, and worker safety assessment.

Optical character recognition (OCR) extracts text from digital images and video, underpinning license plate recognition, document processing, and pharmaceutical inspection.

Pattern recognition analyzes visual data to identify recurring structures, textures, or anomalies — central to defect detection in manufacturing and image processing tasks in medical diagnostics.

Convolutional neural networks (CNNs) are the dominant architecture for most image recognition and object detection work. These deep learning algorithms learn hierarchical visual features from large volumes of training data, with early layers responding to edges and textures and deeper layers to object parts and whole objects, and they substantially outperform older rule-based computer vision techniques.
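At the lowest level of that hierarchy, a convolution layer slides small filters over the image. The sketch below computes one such filter by hand in plain Python; like PyTorch's `Conv2d`, it is technically a cross-correlation. The kernel values are set by hand for illustration, whereas a CNN learns them during training, and the helper names are hypothetical.

```python
from typing import List

Matrix = List[List[float]]


def conv2d(image: Matrix, kernel: Matrix) -> Matrix:
    """'Valid' 2D cross-correlation: what a CNN convolution layer computes for one channel."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [
        [
            sum(image[i + u][j + v] * kernel[u][v] for u in range(kh) for v in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def relu(feature_map: Matrix) -> Matrix:
    """Elementwise ReLU activation, applied after the convolution."""
    return [[max(0.0, x) for x in row] for row in feature_map]


# 4x5 grayscale image: dark on the left, bright from column 2 onward.
image = [[0, 0, 1, 1, 1] for _ in range(4)]
# Hand-set vertical-edge filter; a trained CNN learns filters like this from data.
kernel = [[-1, 1], [-1, 1]]
print(relu(conv2d(image, kernel)))  # each row responds only at the dark-to-bright edge
```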

3. Applications of Computer Vision Across Industries

3.1 Computer Vision Applications in Healthcare and Medical Imaging

Computer vision enables machines to interpret visual data in real time, powering applications such as automated diagnostics, surgical assistance, and patient monitoring. By mid-2025, the FDA had cleared approximately 873 AI/ML-enabled medical devices, with radiology accounting for the largest share. These models assist radiologists by flagging anomalies in medical images, and on some tasks they surface findings earlier than unaided human review.

1. Medical imaging analysis. Deep learning algorithms assist in interpreting X-rays, CT scans, magnetic resonance imaging (MRI), and pathology slides, detecting anomalies such as tumors, often with greater accuracy than human radiologists on specific, narrowly defined tasks.

2. Cancer detection. Machine learning models detect subtle differences between cancerous and non-cancerous tissue in MRI scans, CT images, and histopathology slides, supporting early diagnosis and reducing missed findings in high-volume screening programs.

3. Diabetic retinopathy and disease screening. Automated analysis of retinal photographs identifies disease signs in mass screening settings without requiring an on-site specialist. Published evaluations report around 87% sensitivity in controlled settings.

4. Surgical tool tracking. Cameras combined with computer vision models detect and track surgical instruments throughout a procedure, reducing the risk of retained instruments and supporting workflow documentation.

5. Patient fall detection and ICU monitoring. Pose estimation applied to ward cameras detects patient falls in real time, alerting caregivers. In intensive care units, continuous posture and movement monitoring flags anomalies during long shifts, helping staff focus on decision making rather than constant manual observation.

6. Rehabilitation and movement analysis. Pose estimation on video of patients performing exercises allows automated assessment of range of motion and gait patterns, supporting home-based rehabilitation programs.
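Once a pose estimator has produced joint coordinates, range-of-motion assessment reduces to geometry. A minimal sketch, assuming 2D `(x, y)` keypoints for hip, knee, and ankle from any pose model; the function names are hypothetical, and 2D angles are distorted when the limb is not roughly parallel to the image plane:

```python
import math
from typing import Iterable, Tuple

Point = Tuple[float, float]  # (x, y) image coordinates of one detected keypoint


def joint_angle(a: Point, b: Point, c: Point) -> float:
    """Angle in degrees at keypoint b between segments b->a and b->c (0 to 180)."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    cross = v1[0] * v2[1] - v1[1] * v2[0]
    dot = v1[0] * v2[0] + v1[1] * v2[1]
    # atan2(|cross|, dot) stays numerically stable near 0 and 180 degrees.
    return math.degrees(math.atan2(abs(cross), dot))


def range_of_motion(frames: Iterable[Tuple[Point, Point, Point]]) -> float:
    """Spread of the knee angle over a clip of (hip, knee, ankle) keypoint triples."""
    angles = [joint_angle(hip, knee, ankle) for hip, knee, ankle in frames]
    return max(angles) - min(angles)


# Hypothetical two-frame clip: leg straight, then knee bent to a right angle.
clip = [((0, 2), (0, 1), (0, 0)), ((0, 2), (0, 1), (1, 1))]
print(round(range_of_motion(clip), 1))  # 90.0
```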

What's new (2025–2026): Multimodal AI models integrating visual data with clinical notes and patient history are entering early commercial use. Federated learning — training models across hospital networks without centralizing patient data — is gaining traction as a privacy-preserving approach to analyze images at scale.

3.2 Computer Vision Projects in Biotech

7. Drug discovery imaging. Computer vision algorithms screen compound libraries by analyzing microscopy images of cells, processing millions of digital images where human review would be impractical, accelerating early-stage drug discovery.

8. Cell counting and classification. Automated cell counting, morphology classification, and detection of abnormal cells from microscopy images are standard tools in research laboratories and increasingly used in clinical pathology.

9. Biomanufacturing inspection. Computer vision technology monitors biopharmaceutical production for contamination and quality deviations — vial integrity, fill levels, and packaging — reducing waste and ensuring compliance. Such systems improve operational efficiency while reducing the human error rate in quality checks.

3.3 Autonomous Vehicles and Advanced Driver Assistance Systems

Computer vision is the primary sensing modality for autonomous vehicles and advanced driver assistance systems, though approaches vary across companies: Waymo fuses cameras with lidar and radar, while Tesla relies on cameras alone.

10. Autonomous vehicles. Real-time object recognition is essential for autonomous vehicles to navigate safely. Waymo operates a fully driverless commercial robotaxi service in ten US cities as of early 2026, has passed 500,000 paid rides per week, and reports 90% fewer serious-injury crashes than human drivers across nearly 100 million driverless miles. Tesla's camera-only Cybercab began small-scale unsupervised operation in Austin, Texas, in January 2026. In China, Baidu's Apollo Go had delivered over 14 million rides across 16 cities by mid-2025.

11. Advanced driver assistance systems and object recognition. Computer vision is used to recognize objects — traffic signs, pedestrians, cyclists, and other vehicles — for lane keeping, adaptive cruise control, automatic emergency braking, and blind-spot monitoring. These features are now standard or required equipment in new vehicles across most major markets.

12. Driver monitoring and drowsiness detection. In-cabin cameras use facial landmark detection and gaze estimation to track driver alertness, detecting drowsiness and distraction and triggering alerts to reduce human error behind the wheel. EU regulations now mandate such systems in new passenger vehicles.

13. Lane detection and traffic sign recognition. Computer vision identifies lane markings, road edges, traffic signs, and obstacles in real time using image segmentation and object detection — a core component of both ADAS and fully autonomous stacks.

14. Traffic flow analysis. Municipal traffic systems run computer vision on existing CCTV infrastructure to measure vehicle counts and speeds, detect incidents, and adjust signal timing in real time. These are among the most widely deployed computer vision applications in public infrastructure.

15. Vehicle inspection. Automated exterior and underbody inspection systems scan vehicles in roughly 20–30 seconds, generating condition reports for fleet operators, insurers, and car rental companies.

What's new (2025–2026): BYD's God's Eye 5.0 system rolled out across 2.3 million vehicles in China. Wayve demonstrated a mapless, camera-based autonomy stack operating in novel cities, with Nissan planning consumer integration.

3.4 Retail Computer Vision Applications

16. Self-checkout and cashierless stores. Camera-based systems track items customers pick up and charge accounts automatically. Simpler implementations — terminals that identify items placed on a scale — are more widely deployed than full cashierless environments.

17. Shelf stock monitoring. Vision-based systems on robots or fixed cameras monitor shelf stock levels, detect misplaced items, and identify pricing errors, triggering restock alerts before shelves run empty.

18. Customer behavior analysis and heatmapping. In-store cameras analyze customer behavior (traffic patterns, dwell time at fixtures, queue formation) and generate heatmaps that help retailers optimize product placement and store layout and understand which displays drive purchasing decisions.

19. Inventory and warehouse automation. In fulfillment centers, vision-guided robots combine object detection and tracking with label reading to identify packages and determine storage locations, enabling flexible picking across items of varied shapes and sizes.

20. Loss prevention. Computer vision systems flag behavioral signals associated with theft and alert security staff. Behavioral detection faces fewer regulatory constraints than identity-based facial recognition, which is restricted in several jurisdictions.

3.5 Security and Surveillance Applications of Computer Vision

21. Facial recognition. Used for access control, border management, and law enforcement identification. The EU AI Act prohibits real-time remote biometric identification, including facial recognition, in publicly accessible spaces for law enforcement purposes, except in narrowly defined cases.

22. Suspicious behavior monitoring. AI surveillance systems flag behavioral anomalies — loitering in restricted areas, unusual movement patterns, abandoned objects — relying on object detection and pattern recognition rather than biometric matching.

23. License plate recognition. Object detection combined with optical character recognition reads vehicle plates in real time for toll automation, parking management, fleet tracking, and building access control.

24. Fire and smoke detection. Deep learning algorithms continuously analyze camera feeds to identify early signs of fire or smoke — drifting haze, flickering flames — enabling faster detection than traditional heat sensors in warehouses, factories, and forests.

25. Crowd monitoring and management. Computer vision estimates crowd density, detects surges, and identifies when individuals may have fallen or require assistance, supporting event safety and real-time decision making.

3.6 Agriculture Computer Vision Applications

26. Crop health monitoring and plant disease detection. Multispectral drone and satellite imagery analyzed by machine learning models detects disease, pest damage, and nutrient stress. Deep learning algorithms classify disease type and severity from leaf and canopy images, enabling targeted interventions.

27. Insect detection and pest monitoring. Object detection on high-resolution trap images counts and identifies pest insects at scale, informing spray decisions without broad-spectrum pesticide application.

28. Livestock monitoring. Camera-based systems track animal behavior, posture, and activity to detect lameness, illness, or stress. Individual animal identification using body pattern recognition is commercially available for cattle.

29. Automated harvesting and weeding. Robotic systems guided by computer vision selectively harvest ripe fruit and vegetables. Separate weed detection systems identify individual weed plants among crops, enabling targeted spot-spraying that reduces herbicide use.

30. UAV farmland monitoring and yield prediction. Unmanned aerial vehicles with imaging sensors provide high-resolution field surveys. Computer vision analysis supports crop mapping, growth stage assessment, irrigation management, and pre-harvest yield estimation.

3.7 Manufacturing Computer Vision Applications

Manufacturing is the largest end-user industry for computer vision by revenue. Automating labor-intensive inspection delivers direct efficiency and cost gains: computer vision systems can achieve more than 95% accuracy in detecting defects in manufacturing processes, significantly improving quality control compared to manual inspections.

31. Quality control and defect detection. Automated visual inspection on assembly lines detects scratches, dimensional deviations, contamination, and packaging errors at speeds manual inspection cannot match. Human error accounts for nearly a quarter of inspection-related issues in factory settings.

32. Surface and assembly line inspection. Computer vision analyzes texture, finish quality, and coating consistency. Vision-based systems also monitor assembly lines in real time, immediately flagging misaligned parts, jams, or out-of-sequence steps to maintain consistent quality.

33. Missing item and packaging inspection. Before products are sealed or shipped, vision systems verify all required components are present — every bottle filled, every component installed, every insert included.

34. Predictive maintenance. Cameras and thermal imaging monitor equipment for early-stage anomalies — overheating, leaks, corrosion, and unusual surface patterns on machinery — identifying failure precursors before costly breakdowns occur.

35. PPE compliance and workplace safety. Computer vision monitors whether workers are wearing required personal protective equipment — helmets, vests, glasses, gloves — across factory floors, alerting supervisors to violations or unsafe proximity to hazardous equipment.

36. Vision-guided robotics. Vision-guided robots use object recognition and tracking to locate and orient parts for assembly, enabling flexible automation in manufacturing processes that handle varying product geometry.

3.8 Smart City Computer Vision Projects

Smart city applications use existing urban camera infrastructure to improve safety and public services. Such systems are among the fastest-growing computer vision projects in the public sector, delivering operational efficiency gains in cities of all sizes.

37. Traffic flow analysis and violations detection. Computer vision on street cameras measures vehicle counts, speeds, and density, enabling adaptive signal control. Vision-based systems also detect traffic violations (speeding, red-light running, illegal turns) to support automated enforcement. Computer vision tools used for traffic management can process image and video data from thousands of cameras simultaneously.

38. Smart parking management. Camera systems detect free and occupied spaces in real time, feeding availability data to driver guidance apps and reducing time spent searching for parking in dense urban areas.

39. Graffiti and urban maintenance detection. Computer vision applied to city cameras or drone imagery detects graffiti, potholes, broken signage, and overflowing bins, enabling proactive maintenance scheduling.

40. Infrastructure damage detection. Thermal camera imaging analyzed by computer vision models identifies moisture intrusion, subsurface water leaks, and early-stage structural damage in buildings and public infrastructure, allowing maintenance teams to intervene before damage escalates.
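Vehicle counts like those in item 37 are commonly derived by combining a tracker with a virtual counting line. A sketch under stated assumptions: each frame is a dict mapping track IDs to centroids produced by some upstream tracker, the line is horizontal at `line_y`, and image y grows downward; `count_line_crossings` is a hypothetical helper.

```python
from typing import Dict, Iterable, Tuple

Centroid = Tuple[float, float]  # (x, y) center of a tracked vehicle's box


def count_line_crossings(
    frames: Iterable[Dict[int, Centroid]], line_y: float
) -> Tuple[int, int]:
    """Count tracked objects whose centroid crosses the horizontal line y = line_y.

    Returns (downward, upward) counts; each track ID is counted at most once.
    """
    last_y: Dict[int, float] = {}  # previous y position per track
    counted = set()
    down = up = 0
    for frame in frames:
        for track_id, (_, y) in frame.items():
            prev = last_y.get(track_id)
            if prev is not None and track_id not in counted:
                if prev < line_y <= y:  # moved from above the line to on/below it
                    down += 1
                    counted.add(track_id)
                elif prev >= line_y > y:  # moved from on/below the line to above it
                    up += 1
                    counted.add(track_id)
            last_y[track_id] = y
    return down, up


# Hypothetical tracker output: vehicle 1 drives down the frame, vehicle 2 drives up.
frames = [
    {1: (5, 10), 2: (20, 90)},
    {1: (5, 40), 2: (20, 60)},
    {1: (5, 55), 2: (20, 45)},
]
print(count_line_crossings(frames, line_y=50))  # (1, 1)
```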

3.9 Object Tracking and Decision Making in Sports

41. Player tracking and performance analytics. Multi-camera systems use object tracking to follow player positions, speeds, and movement patterns throughout matches and training sessions, supporting tactical analysis and injury prevention across professional leagues.

42. Automated refereeing and decision making. Hawk-Eye is used in tennis, cricket, and football for line calls, ball tracking, and goal-line decisions. The Video Assistant Referee (VAR) system supports offside and foul decisions through video review, and semi-automated offside technology adds computer vision tracking of players' limbs and the ball, reducing officiating errors.

43. Broadcast enhancements and augmented reality. AI-driven cameras automatically follow the ball or key players, and augmented reality overlays (first-down lines, pitch speed graphics, live player statistics) are generated in real time using object tracking.

3.10 Finance and Insurance Computer Vision Applications

44. Biometric verification and fraud detection. Face recognition and liveness detection are standard in digital banking know-your-customer (KYC) workflows. Deepfake detection has become a distinct product category as synthetic identity fraud using AI-generated faces has increased.

45. Automated document processing. Optical character recognition, combined with image preprocessing and natural language processing, extracts structured data from contracts, invoices, and identity documents, reducing human error across financial operations.

46. Claims processing and remote inspection. Computer vision analyzes vehicle damage images to estimate repair costs and flag fraud. CV-equipped drones inspect rooftops and disaster-affected areas without sending adjusters into hazardous conditions.

3.11 Geospatial and Construction Computer Vision Applications

47. Environmental monitoring and topographic mapping. Computer vision techniques process aerial photographs to generate 3D terrain models. Satellite imagery analysis tracks deforestation, water level changes, wildfire progression, and flood extents, supporting climate monitoring and disaster response.

48. Urban planning and infrastructure analysis. Image and video data from satellites and drones is analyzed to track urban growth, land use change, and infrastructure condition at regional scales, informing sustainable city planning.

49. Construction safety and structural inspection. PPE compliance monitoring and hazardous zone intrusion detection are the most widely deployed vision-based systems in construction. Computer vision also inspects materials and completed work for deviations from specification, such as rebar spacing, concrete cracks, and weld quality.

50. Crack and structural damage detection. Cameras on drones or inspection robots scan bridges, buildings, and infrastructure for cracks and anomalies at scale, identifying issues that manual inspection misses on complex or elevated surfaces.

4. Building Production Computer Vision Models: Tools for Training Data

A persistent challenge in deploying computer vision is building high-quality training data. Labeling errors degrade model quality, and naive data collection produces datasets that are unrepresentative or filled with near-duplicate samples that consume labeling budget without improving performance.
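One common way to catch near-duplicates is to embed every image with a pretrained encoder and drop samples whose cosine similarity to an already-kept sample exceeds a threshold. This is a generic sketch, not LightlyStudio's implementation: the embeddings are toy vectors, `drop_near_duplicates` is a hypothetical helper, and the 0.95 threshold would need tuning per dataset.

```python
import math
from typing import List, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity of two embedding vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def drop_near_duplicates(embeddings: List[Sequence[float]], threshold: float = 0.95) -> List[int]:
    """Return indices to keep; a sample is dropped if it is too similar to one already kept."""
    kept: List[int] = []
    for i, emb in enumerate(embeddings):
        if all(cosine(emb, embeddings[k]) < threshold for k in kept):
            kept.append(i)
    return kept


# Toy embeddings: sample 1 is a near-copy of sample 0.
embeddings = [[1.0, 0.0], [0.999, 0.01], [0.0, 1.0], [0.7, 0.7]]
print(drop_near_duplicates(embeddings))  # [0, 2, 3]
```

The pairwise loop grows quadratically in the worst case; at dataset scale, an approximate nearest-neighbor index is the usual replacement.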

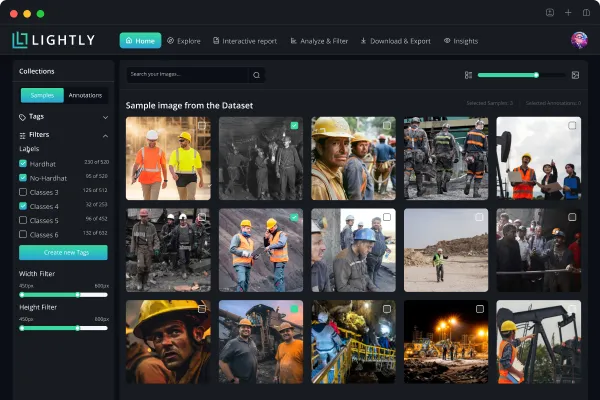

LightlyStudio is an open-source (Apache-2.0) data curation and labeling platform for image and video workflows, released in March 2026 as the successor to LightlyOne. It combines curation, annotation, and embedding-based search into a single platform. Built with Rust for performance and backed by DuckDB for local storage, it runs on Python 3.9–3.14 on Windows, Linux, and macOS without requiring a GPU. Core capabilities include embedding-based data selection that surfaces representative and diverse samples, near-duplicate detection, metadata filtering, built-in annotation tools for detection, segmentation, and keypoint tasks, and active learning loops. A Python-first SDK with Pydantic schemas enables programmatic workflows. YOLO and COCO export formats are supported. Migration from LightlyOne, Encord, Voxel51, Roboflow, and V7Labs is available. Limitation: cloud annotation file imports are limited to specific formats at launch, and the hosted collaborative version is not yet generally available.
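Embedding-based selection for diversity can be illustrated with greedy k-center (farthest-point) sampling, a standard coreset heuristic. This is a sketch of the general technique, not necessarily what LightlyStudio runs internally; `k_center_greedy` is a hypothetical name, and the 2D points stand in for high-dimensional image embeddings.

```python
import math
from typing import List, Sequence


def k_center_greedy(embeddings: List[Sequence[float]], k: int, first: int = 0) -> List[int]:
    """Select up to k indices that spread out over embedding space (farthest-point sampling)."""
    if not embeddings or k <= 0:
        return []
    selected = [first]
    # Distance from every sample to its closest already-selected sample.
    nearest = [math.dist(e, embeddings[first]) for e in embeddings]
    while len(selected) < min(k, len(embeddings)):
        idx = max(range(len(embeddings)), key=nearest.__getitem__)
        if nearest[idx] == 0.0:
            break  # everything left duplicates something already selected
        selected.append(idx)
        for i, e in enumerate(embeddings):
            nearest[i] = min(nearest[i], math.dist(e, embeddings[idx]))
    return selected


# Toy 2D embeddings: two tight clusters plus one outlier.
points = [(0, 0), (0.1, 0), (10, 0), (10, 0.1), (0, 10)]
print(k_center_greedy(points, k=3))  # [0, 3, 4]: one pick per region
```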

LightlyTrain is a framework for self-supervised pretraining, fine-tuning, knowledge distillation, and autolabeling on domain-specific visual data, with no labeled training data required for pretraining. It supports YOLO (v8 and v11), ViTs, RT-DETR, DINOv2, DINOv3, ResNet, Faster R-CNN, and ConvNeXt. A January 2026 update added DINOv3 support, EoMT semantic segmentation, PicoDet models for low-power devices, and ONNX/TensorRT FP16 export for edge deployment. It is licensed under AGPL-3.0, with commercial licensing available. Limitation: LightlyTrain integrates into existing ML pipelines rather than replacing them, so it is not an end-to-end training and deployment platform.
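Knowledge distillation trains a small student model to mimic a larger teacher. The classic soft-target formulation from Hinton et al. (2015) compares temperature-softened output distributions, as sketched below; this illustrates the general technique rather than LightlyTrain's own distillation method, and the logits are made-up values.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Temperature-scaled softmax; higher temperatures give softer distributions."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max so exp() cannot overflow
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits: List[float], teacher_logits: List[float],
                      temperature: float = 4.0) -> float:
    """KL(teacher || student) on temperature-softened outputs, scaled by T^2."""
    p = softmax(teacher_logits, temperature)  # soft targets from the teacher
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2  # T^2 keeps gradient scale comparable across temperatures


teacher = [4.0, 1.0, 0.5]
print(distillation_loss([3.5, 1.2, 0.4], teacher))  # small: student nearly agrees
print(distillation_loss([0.5, 1.0, 4.0], teacher))  # larger: student disagrees
```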

LightlySSL is Lightly's open-source self-supervised learning research library (MIT license), primarily for academic computer vision projects, and the foundation on which LightlyTrain is built.

These tools are most relevant to teams working on domain-specific applications — manufacturing inspection, agricultural monitoring, medical imaging, autonomous systems — where training data is scarce and labeling cost is a practical constraint.

5. Future Trends in Computer Vision

5.1 Multimodal AI and Image Analysis

Vision-language models that jointly process visual data and text — such as GPT-4o and Gemini — enable natural language querying of image databases and automated report generation from inspection images. These computer vision tools require careful validation before deployment in high-stakes contexts.

5.2 Edge Inference and Intelligent Systems

Edge devices accounted for nearly half of computer vision deployments in 2025. Dedicated neural processing units from Qualcomm, NVIDIA, and AMD have substantially increased on-device throughput. NVIDIA's Rubin platform executes YOLOv8 at 240 frames per second under 15 watts, a significant advance for edge-deployed intelligent systems where bandwidth and latency are constraints.
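The quoted throughput and power figures translate directly into per-frame budgets. A back-of-the-envelope sketch: the time per frame is derived from sustained throughput, which is not the same as single-frame latency if frames are batched, and the energy figure is an upper bound because 15 W is a ceiling. The four-camera deployment is hypothetical.

```python
# Figures quoted above; the deployment below is hypothetical.
fps = 240.0      # sustained inference throughput, frames per second
power_w = 15.0   # power ceiling, watts

time_per_frame_ms = 1000.0 / fps            # about 4.17 ms per frame at that throughput
energy_per_frame_mj = power_w / fps * 1000  # joules per frame (W / fps), in millijoules

# Hypothetical site: 4 cameras streaming at 30 fps each into one device.
cameras, camera_fps = 4, 30
utilization = cameras * camera_fps / fps    # share of the device's throughput consumed

print(f"{time_per_frame_ms:.2f} ms/frame, {energy_per_frame_mj:.1f} mJ/frame, "
      f"{utilization:.0%} utilized")
```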

5.3 Advanced 3D Perception, Augmented Reality, and Object Tracking

3D reconstruction is among the fastest-growing segments, projected to grow at around 17% CAGR through 2031. Gaussian splatting has improved 3D reconstruction quality from standard cameras. Augmented reality computer vision applications in manufacturing guidance, surgical assistance, and sports broadcasting are expanding as real-time object tracking and 3D perception mature.
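Compound growth rates are easy to misread. The article does not state the base year, so this sketch assumes 2025, giving six compounding years to 2031; `growth_multiple` and `implied_cagr` are illustrative helpers.

```python
def growth_multiple(cagr: float, years: int) -> float:
    """How many times larger a market becomes after `years` at a constant annual growth rate."""
    return (1 + cagr) ** years


def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Inverse calculation: the constant annual rate linking two market sizes."""
    return (end_value / start_value) ** (1 / years) - 1


# Assumes a 2025 base year, so 2025 -> 2031 spans six compounding years.
multiple = growth_multiple(0.17, 6)
print(f"{multiple:.2f}x")  # about 2.57x
```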

5.4 Self-Supervised Learning and Synthetic Training Data

Self-supervised pretraining reduces the training data needed to achieve strong downstream performance. Separately, generative models produce synthetic digital images for scenarios that are rare, dangerous, or privacy-sensitive — particularly in automotive, medical, and manufacturing computer vision projects. Such systems help teams build robust computer vision models even when real-world labeled data is limited.
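Many self-supervised methods, SimCLR among them, learn without labels through a contrastive objective: two augmented views of the same image should have similar embeddings, and views of different images should not. A simplified single-anchor InfoNCE loss is sketched below with toy 2D embeddings; it illustrates the general idea rather than the specific objective any Lightly product uses, and `info_nce` is a hypothetical name.

```python
import math
from typing import List, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity of two non-zero embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norms = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norms


def info_nce(anchor: Sequence[float], positive: Sequence[float],
             negatives: List[Sequence[float]], temperature: float = 0.1) -> float:
    """Contrastive loss for one anchor: low when its augmented view is the closest match."""
    logits = [cosine(anchor, positive) / temperature]
    logits += [cosine(anchor, n) / temperature for n in negatives]
    peak = max(logits)
    log_sum_exp = peak + math.log(sum(math.exp(z - peak) for z in logits))
    return log_sum_exp - logits[0]  # equals -log softmax probability of the positive


# Toy embeddings: two augmented views of one image should agree; other images should not.
anchor = [1.0, 0.0]
negatives = [[0.0, 1.0], [-1.0, 0.0]]
print(info_nce(anchor, [0.9, 0.1], negatives))  # small loss: views agree
print(info_nce(anchor, [0.0, 1.0], negatives))  # larger loss: views disagree
```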

5.5 Regulatory Developments

The EU AI Act establishes binding requirements for high-risk computer vision applications. Real-time remote biometric identification in publicly accessible spaces for law enforcement purposes is prohibited except in narrowly defined cases. Medical imaging AI and autonomous vehicles face documentation, auditing, and human oversight requirements. Teams deploying computer vision solutions in regulated sectors need to engage with these requirements during development.

6. Conclusion

Computer vision now rests on deep learning models that learn visual patterns from large datasets, and steady gains in accuracy, together with cheaper compute and open tooling, have put it within reach of companies of all sizes.

From medical imaging analysis to autonomous driving, from crop monitoring to infrastructure inspection, computer vision applications now address a wide range of industry needs. The current challenges are practical: building representative training data, adapting computer vision techniques to specific deployment environments, managing labeling cost, and navigating evolving regulatory requirements.

For teams building computer vision projects, the core priorities remain the same across industries: select the right training data, use self-supervised methods to reduce labeling cost, and validate model performance rigorously in the target environment — not just on benchmark datasets.

Last updated April 2026. Market figures are drawn from published analyst reports and reflect estimates that vary across sources.
