The Engineer's Guide to Large Vision Models


The Engineer's Guide to Large Vision Models explores the shift from traditional CNNs to massive Transformer-based architectures like ViT, SAM2, and AIMv2. It provides a technical breakdown of how these models leverage self-supervised learning and multimodal fusion to rival human performance on several benchmarks, in tasks ranging from zero-shot object detection to the complex multimodal reasoning of models such as Gemini 2.5 Pro. For ML engineers, this guide serves as a roadmap for navigating the 2026 landscape of high-resolution video understanding and efficient domain adaptation.

Short on time? Below is some key information and examples of large vision models.

What are Large Vision Models (LVMs)?

Large Vision Models are advanced deep learning models for computer vision with millions or billions of parameters, trained on vast image datasets to learn intricate visual patterns. They are the visual counterparts to large language models, leveraging Transformer architectures to perform many different vision tasks including image classification, object detection, segmentation, and multimodal reasoning.

How do large vision models work?

Most large vision models use Transformer-based architectures to process visual data instead of traditional convolutional neural networks. A Vision Transformer (ViT), for example, breaks an image into patches and processes them using self-attention mechanisms - similar to how large language models process text tokens. Models like CLIP combine vision and natural language processing by training image and text encoders together to align image and text representations in a shared space. These large vision models work by pretraining on massive datasets, then fine-tuning or prompting for specific tasks.

What are some examples of large vision models?

- Vision Transformer (ViT) - Google's foundational Transformer-based model for image classification.

- CLIP - OpenAI's contrastive model aligning image and text representations for zero-shot classification.

- SAM2 - Meta's Segment Anything Model 2, extending segmentation to video, with 6x faster image inference than the original SAM.

- Gemini 2.5 Pro - Google's multimodal model processing visual inputs including images, video, and text.

- FLUX.1 / FLUX.2 - Black Forest Labs' open-weight image generation series.

- DINOv2 / DINOv3 - Meta's self-supervised ViT models for various vision tasks.

- AIMv2 - Apple's autoregressive visual model (November 2024), using a novel sequential modeling approach that treats image patches and text tokens as a unified sequence.

- LLaVA-NeXT - Open-source multimodal model supporting 4x more input pixels than its predecessor, competitive with proprietary vision language models on key benchmarks.

What is the difference between VLM and LVM?

Large Vision Models (LVMs) are a broad category covering all large-scale neural networks designed for visual tasks - including vision-only models like ViT and DINOv2. Vision Language Models (VLMs) are a subset of LVMs that combine vision with natural language processing, enabling models to process visual and textual data together. CLIP, LLaVA-NeXT, and Gemini are VLMs; ViT and DINOv2 are LVMs that are not VLMs.

What are LLM examples, the big 4 AI models, and the 4 types of ML models?

Common large language models include GPT-4o (OpenAI), Gemini (Google), Claude (Anthropic), and LLaMA (Meta) - often cited as the leading frontier models. The four main types of machine learning models are: supervised learning, unsupervised learning, semi-supervised learning, and reinforcement learning. Large vision models draw on these paradigms, along with self-supervised learning, depending on the training objective.

Large Vision Models (LVMs) have transformed how machines interpret visual data. These computer vision models now perform many different vision tasks - from analyzing images for manufacturing defects to enabling autonomous vehicles to recognize pedestrians - with accuracy that rivals human performance on several benchmarks.

In this article, we explore how large vision models work, compare leading architectures, and examine real-world applications. We cover:

- What are Large Vision Models

- Comparison of LVMs

- Architectures of LVMs

- Key Features and Training Strategies

- Fine-tuning and Adaptation

- Real-world Applications

- Limitations and Challenges

- Future Trends

- Conclusion: Large Vision Models

Now, let's dive in.

What are LVMs?

Large Vision Models (LVMs) are deep neural networks designed to process visual information for computer vision tasks including image classification, object detection, segmentation, text-to-image generation, and multimodal reasoning.

Like large language models, LVMs are trained on massive image datasets - often with textual descriptions - allowing them to learn intricate visual patterns and perform various vision tasks: visual search, image captioning, wildlife tracking, photo and video enhancement, and identity verification.

These models typically have millions to billions of parameters, enabling them to capture rich visual features across complex visual data. Unlike traditional convolutional neural networks that require task-specific training, modern large vision models generalize across many different vision tasks through large-scale pretraining.

Many large vision models are also designed to bridge the gap between vision and natural language, enabling users to interact with visual data through text queries - a defining feature of today's vision language models.

Comparison of most popular LVMs

With many computer vision models emerging across modalities, comparing architecture, training approach, and capabilities guides model selection for specific applications.

Architectures of LVMs

Large vision models span several architectural families, each suited to different computer vision tasks.

Image Classification and Understanding

Traditional computer vision relied heavily on convolutional neural networks (CNNs) for image processing. Modern large vision models increasingly replace convolutional neural networks with Transformer architectures that scale better to large datasets and generalize across visual tasks more effectively.

ViT (Vision Transformer)

The Vision Transformer (ViT) divides an image into fixed-size patches, flattens and embeds them, and adds positional encodings. The sequence is processed by a Transformer encoder that utilizes self-attention mechanisms to model relationships across the entire image - rather than the local receptive fields used by convolutional neural networks.
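The patch-splitting step described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the reference implementation: the helper names `patchify` and `add_position_embeddings` are made up for this example, the image is a nested list rather than a tensor, and the learned linear projection that a real ViT applies to each flattened patch is omitted.

```python
def patchify(image, patch_size):
    """Split an H x W x C image (nested lists) into flattened patches.

    Patches are returned in row-major order; each one is a flat list of
    length patch_size * patch_size * C.
    """
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image sides must be divisible by patch_size")
    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            patch = []
            for row in range(top, top + patch_size):
                for col in range(left, left + patch_size):
                    patch.extend(image[row][col])  # append every channel value
            patches.append(patch)
    return patches


def add_position_embeddings(tokens, position_embeddings):
    """Add one position vector to each token, element-wise."""
    return [
        [t + p for t, p in zip(token, pos)]
        for token, pos in zip(tokens, position_embeddings)
    ]


# Toy 4x4 RGB image whose pixel value encodes its (row, col) position.
image = [[[row * 4 + col] * 3 for col in range(4)] for row in range(4)]
patches = patchify(image, patch_size=2)
print(len(patches), len(patches[0]))  # 4 patches, each with 2 * 2 * 3 = 12 values

# Hypothetical position vectors; in a real model these are learned.
positions = [[0.1 * index] * 12 for index in range(len(patches))]
tokens = add_position_embeddings(patches, positions)
```

The resulting token list is what the Transformer encoder would attend over, so every patch can interact with every other patch in a single layer.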

ViT-H/14 reaches roughly 88.5% top-1 accuracy on ImageNet when pretrained on JFT-300M (about 300 million images), matching or exceeding the strongest CNNs of the time with less pretraining compute; later scaled-up variants trained on larger datasets pushed this above 90%. Beyond image classification, ViT serves as a feature extractor, producing dense visual embeddings. Multimodal systems like LLaVA-NeXT project these image embeddings into the language model's input space for multimodal reasoning.

Multimodal Vision-Language Models

Vision language models process visual and textual data simultaneously, enabling image captioning, visual question answering, and zero-shot classification. They learn to align image and text representations in a shared latent space.

CLIP

CLIP consists of an image encoder and a text encoder, both mapped to a shared latent space using contrastive learning. It maximizes similarity between matching image-text pairs. At inference, CLIP performs zero-shot classification by comparing image embeddings to text descriptions - without task-specific retraining. This makes it highly effective for visual search, content retrieval, and content moderation tasks where users need to query visual data through natural language.

The image encoder processes visual inputs, while the text encoder processes natural language descriptions. CLIP trains both encoders jointly with a contrastive objective.

Given a batch of image-text pairs, CLIP learns to maximize the similarity between matching pairs while minimizing the similarity between non-matching ones. Specifically, each image and text are projected into a joint embedding space using their respective encoders, and a cosine similarity score is computed. The training objective is to align image and text representations such that correct image-text pairs have the highest similarity scores.
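The objective above can be written as a symmetric cross-entropy over a matrix of scaled cosine similarities. The sketch below is a plain-Python illustration under stated assumptions: the function names are invented for this example, the embeddings are passed in directly instead of coming from real encoders, and the temperature of 0.07 is a fixed illustrative value (in CLIP it is a learned parameter).

```python
import math


def l2_normalize(vector):
    norm = math.sqrt(sum(x * x for x in vector))
    return [x / norm for x in vector]


def cross_entropy(logits, target_index):
    """Cross-entropy of softmax(logits) against a single target index."""
    max_logit = max(logits)
    log_sum_exp = max_logit + math.log(sum(math.exp(z - max_logit) for z in logits))
    return log_sum_exp - logits[target_index]


def clip_contrastive_loss(image_embeddings, text_embeddings, temperature=0.07):
    """Symmetric contrastive loss; pair i of images matches pair i of texts."""
    images = [l2_normalize(v) for v in image_embeddings]
    texts = [l2_normalize(v) for v in text_embeddings]
    # logits[i][j] = cosine similarity of image i and text j, divided by temperature.
    logits = [
        [sum(a * b for a, b in zip(img, txt)) / temperature for txt in texts]
        for img in images
    ]
    n = len(logits)
    # Each image should pick out its own text (rows) ...
    image_to_text = sum(cross_entropy(logits[i], i) for i in range(n)) / n
    # ... and each text should pick out its own image (columns).
    text_to_image = sum(
        cross_entropy([logits[i][j] for i in range(n)], j) for j in range(n)
    ) / n
    return (image_to_text + text_to_image) / 2


aligned = clip_contrastive_loss([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]])
shuffled = clip_contrastive_loss([[1.0, 0.0], [0.0, 1.0]], [[0.0, 1.0], [1.0, 0.0]])
print(aligned < shuffled)  # matching pairs give the lower loss
```

Because every other item in the batch acts as a negative, larger batches provide more negatives per step, which is one reason contrastive training is usually run with large batch sizes.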

Unlike traditional image classification models that rely on a predefined set of labels, CLIP offers a flexible and dynamic approach to image recognition. At inference time, instead of predicting a class from a fixed set, CLIP can classify images based on arbitrary natural language prompts provided by the user.

This is accomplished by encoding multiple text descriptions (e.g., "a cat," "a dog," "a car") and computing their similarity with the image encoding in a shared embedding space to determine the most relevant match. This open-ended classification capability makes CLIP highly adaptable to various downstream tasks without requiring task-specific retraining. When fine-tuned on previously unseen classes, CLIP leverages its contrastive learning framework to efficiently align textual descriptions with training images in the embedding space.
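The zero-shot procedure can be illustrated as follows. This is only a sketch: the embeddings are small hand-written vectors standing in for real encoder outputs, and `zero_shot_classify` is a hypothetical helper, not part of any CLIP library.

```python
import math


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def zero_shot_classify(image_embedding, prompt_embeddings):
    """Return the best-matching prompt and the similarity score of every prompt."""
    scores = {
        prompt: cosine_similarity(image_embedding, embedding)
        for prompt, embedding in prompt_embeddings.items()
    }
    best_prompt = max(scores, key=scores.get)
    return best_prompt, scores


# Hypothetical embeddings; a real system would obtain these from the
# text encoder (prompts) and the image encoder (image).
prompt_embeddings = {
    "a cat": [0.9, 0.1, 0.0],
    "a dog": [0.1, 0.9, 0.0],
    "a car": [0.0, 0.1, 0.9],
}
image_embedding = [0.8, 0.3, 0.1]

label, scores = zero_shot_classify(image_embedding, prompt_embeddings)
print(label)  # a cat
```

Adding a new class only requires adding a new prompt to the dictionary; no weights change.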

LLaVA-NeXT

LLaVA-NeXT (January 2024) supports 4x more input pixels than its predecessor, LLaVA-1.5, improving performance on tasks requiring fine-grained visual understanding including OCR, chart analysis, and detailed image captioning. LLaVA-NeXT-34B matches or surpasses Gemini Pro on several visual benchmarks while remaining fully open-source.

The model tiles high-resolution images into sub-images, extracts ViT embeddings per tile, and projects them into the language model's input space. LLaVA-Video (2025) extends this to video data with 7B and 72B parameter models, enabling temporal reasoning across video frames.
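The tiling step can be pictured as computing a grid of crop boxes over the input image. The sketch below is a simplified illustration rather than LLaVA-NeXT's actual preprocessing: `tile_boxes` is a hypothetical helper, the 336-pixel tile size is an assumption chosen for the example, and the real pipeline also selects a grid shape and resizes the image to fit it.

```python
def tile_boxes(width, height, tile_size):
    """Return (left, top, right, bottom) crop boxes in row-major order.

    Edge tiles are clipped to the image bounds, so they may be smaller
    than tile_size.
    """
    boxes = []
    for top in range(0, height, tile_size):
        for left in range(0, width, tile_size):
            right = min(left + tile_size, width)
            bottom = min(top + tile_size, height)
            boxes.append((left, top, right, bottom))
    return boxes


boxes = tile_boxes(width=672, height=672, tile_size=336)
print(len(boxes))  # 4 tiles in a 2 x 2 grid
```

Each box would then be cropped, encoded by the ViT, and the per-tile embeddings concatenated before projection into the language model.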

AIMv2

AIMv2 (Apple, November 2024) takes an autoregressive approach to visual representation learning. Rather than contrastive training, AIMv2 pairs a ViT encoder with a causal multimodal decoder that predicts image patches and text tokens as a unified sequence, treating visual data and language as the same prediction problem.

AIMv2-3B achieves 89.5% top-1 ImageNet accuracy with a frozen trunk, outperforming CLIP and DINOv2 on the majority of multimodal benchmarks and on open-vocabulary object detection. The family spans 300M to 2.7B parameters. Its training approach does not depend on the very large batch sizes that contrastive objectives need, which makes it easier to scale than contrastive large vision models.

Gemini

Gemini (current: Gemini 2.5 Pro as of April 2026) uses a joint multimodal Transformer trained end-to-end on visual inputs including images, video, and text. Unlike architectures adapting a frozen vision encoder to an existing LLM, Gemini learns cross-modal representations jointly. Gemini 2.5 Pro adds native chain-of-thought reasoning for complex tasks requiring analysis of visual and textual data together.

Image Segmentation and Object Detection

SAM2

Meta's Segment Anything Model 2 (July 2024) is a unified model for image and video segmentation - 6x faster than the original SAM for image processing and requiring 3x fewer user interactions for video segmentation.

SAM2 adds a memory bank for consistent object tracking across video data. Trained on over 1 billion masks including the SA-V video dataset, SAM2 performs promptable, zero-shot segmentation and object tracking in real time. It is valuable for quality inspection tasks in manufacturing, medical imaging workflows, and analyzing images from autonomous vehicle sensors.

DINOv2 and DINOv3

DINOv2 is a self-supervised ViT that learns visual features without labeled data through student-teacher distillation. It excels at various vision tasks including segmentation, object detection, and dense retrieval where labeled data is limited.
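A rough sketch of the student-teacher idea, in the style of the DINO family, is shown below. It assumes the commonly described setup in which the teacher's weights are an exponential moving average (EMA) of the student's and the student is trained to match the teacher's sharpened output distribution. The function names, temperatures, and momentum value are illustrative assumptions, and details such as teacher centering and multi-crop augmentation are left out.

```python
import math


def log_softmax(logits, temperature):
    scaled = [z / temperature for z in logits]
    max_value = max(scaled)
    log_total = max_value + math.log(sum(math.exp(z - max_value) for z in scaled))
    return [z - log_total for z in scaled]


def self_distillation_loss(student_logits, teacher_logits,
                           student_temp=0.1, teacher_temp=0.04):
    """Cross-entropy between the sharpened teacher and the student distributions."""
    teacher_probs = [math.exp(v) for v in log_softmax(teacher_logits, teacher_temp)]
    student_log_probs = log_softmax(student_logits, student_temp)
    return -sum(t * s for t, s in zip(teacher_probs, student_log_probs))


def ema_update(teacher_weights, student_weights, momentum=0.996):
    """Move each teacher weight a small step toward the student weight."""
    return [
        momentum * t + (1.0 - momentum) * s
        for t, s in zip(teacher_weights, student_weights)
    ]


# The student sees one augmented view, the teacher another; no labels are involved.
agree = self_distillation_loss([2.0, 0.0, 0.0], [2.0, 0.0, 0.0])
disagree = self_distillation_loss([0.0, 2.0, 0.0], [2.0, 0.0, 0.0])
print(agree < disagree)  # the loss is lower when the two views agree
```

Only the student receives gradients; the teacher changes solely through `ema_update`, which keeps its targets stable from step to step.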

DINOv3 (2025) scales this to a 7B-parameter teacher trained on 1.7 billion images, achieving +6 mIoU improvement on ADE20K semantic segmentation over DINOv2, with Gram anchoring loss and a high-resolution training stage.

Image Generation

Stable Diffusion and FLUX

Stable Diffusion uses a latent diffusion model to generate high-resolution images from text prompts. During training, an autoencoder encodes images into a compact latent space. At generation time, the process starts from random noise in that latent space, a denoising network conditioned on the text prompt iteratively refines the latent representation, and a decoder maps the result back to pixels to produce the final image. SD3.5 Large (October 2024) replaces the U-Net with a Diffusion Transformer.

Stable Diffusion excels at photo and video enhancement, game development asset creation, and video editing workflows. FLUX.1 (Black Forest Labs, August 2024) uses a flow matching objective with a Diffusion Transformer. Open-weight variants (Schnell for fast local generation, Dev for research) and FLUX.1 Kontext (May 2025) for image editing make it widely accessible. FLUX.2 [klein] (January 2026) is the fastest current model in the series.

Key Features and Training Strategies for Large Vision Models

Large vision models use several training strategies to learn from massive datasets without requiring full annotation.

Scale: Training large vision models requires significant computational resources and diverse datasets. CLIP trained on 400 million image-text pairs; DINOv2 on 142 million web images; DINOv3 on 1.7 billion images. Pretraining at this scale demands thousands of GPU-hours or more; fine-tuning is far cheaper, especially with parameter-efficient methods, but can still be substantial for the largest models.

Self-supervised learning: Models like DINOv2/v3 train without labeled data using student-teacher frameworks. The training process generates its own supervision signal from visual data. Stable Diffusion's denoising objective is similar in spirit: the model learns to remove noise that was added to latent image representations, so the target comes from the data itself rather than from manual labels.

Weak supervision: CLIP uses internet-scraped image-text pairs where textual descriptions may not precisely match visual content. LLaVA-NeXT uses GPT-4-generated instruction data as a weakly supervised training signal. Large pretrained models significantly reduce labeled data requirements when fine-tuned on specialized domains - important for applications where annotating visual data at scale is impractical.

LightlyTrain supports scalable model training through self-supervised pretraining, distillation, and autolabeling on domain-specific visual data, supporting YOLO (v8, v11), ViTs, RT-DETR, DINOv3, ResNet, and more.

For teams looking to scale self-supervised learning in real-world applications, LightlyTrain offers a production-ready pipeline. It helps improve model performance using unlabeled data and integrates into existing training workflows.


Fine-Tuning and Adaptation

After pretraining, large vision models adapt to specific tasks through several techniques:

LoRA (Low-Rank Adaptation): Injects trainable low-rank matrices into model weights without modifying pretrained parameters. The training process updates only a small fraction of parameters, preserving general visual features while learning domain-specific patterns. Widely used for adapting Stable Diffusion and FLUX to specific styles or image data domains with minimal computational demands.
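To make the low-rank idea concrete, here is a small plain-Python sketch of a linear layer with a LoRA update. It is an illustration under stated assumptions, not a library implementation: `LoRALinear` is a made-up class, matrices are nested lists, and the matrix A is filled with a constant 0.01 for reproducibility where real implementations typically use a small random initialization.

```python
def matvec(matrix, vector):
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


class LoRALinear:
    """Frozen weight W plus a trainable low-rank update (alpha / rank) * B @ A."""

    def __init__(self, weight, rank, alpha=1.0):
        out_features, in_features = len(weight), len(weight[0])
        self.weight = weight  # frozen pretrained weight, never updated
        self.scale = alpha / rank
        # B starts at zero, so the adapted layer initially matches the
        # pretrained layer exactly.
        self.lora_a = [[0.01] * in_features for _ in range(rank)]  # rank x in
        self.lora_b = [[0.0] * rank for _ in range(out_features)]  # out x rank

    def forward(self, x):
        base = matvec(self.weight, x)
        update = matvec(self.lora_b, matvec(self.lora_a, x))
        return [b + self.scale * u for b, u in zip(base, update)]

    def trainable_parameter_count(self):
        rank = len(self.lora_a)
        return rank * len(self.lora_a[0]) + len(self.lora_b) * rank


identity = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
layer = LoRALinear(identity, rank=1)
output = layer.forward([1.0, 2.0, 3.0, 4.0])  # equals the input while B is zero
print(layer.trainable_parameter_count())  # 8 trainable values vs 16 frozen ones
```

The saving grows with layer size: for a d x d weight, the adapter trains 2 * d * rank values instead of d * d.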

Knowledge distillation: Transfers knowledge from a large teacher to a smaller student model using soft probability distributions rather than hard labels, improving generalization. DINOv2 and DINOv3 both use distillation in their model training pipeline.
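The "soft probability distributions" mentioned above are usually obtained by dividing the logits by a temperature before the softmax. The sketch below shows one common formulation, a KL divergence between softened teacher and student outputs scaled by the squared temperature. The function names and the temperature of 2.0 are illustrative assumptions, and this classic supervised-style loss differs in detail from the self-distillation used in the DINO models.

```python
import math


def softmax(logits, temperature=1.0):
    scaled = [z / temperature for z in logits]
    max_value = max(scaled)
    exps = [math.exp(z - max_value) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened distributions, times T^2."""
    teacher = softmax(teacher_logits, temperature)
    student = softmax(student_logits, temperature)
    kl = sum(t * (math.log(t) - math.log(s)) for t, s in zip(teacher, student))
    return kl * temperature ** 2


teacher_logits = [4.0, 1.0, 0.5]
matching = distillation_loss([4.0, 1.0, 0.5], teacher_logits)
diverging = distillation_loss([0.5, 1.0, 4.0], teacher_logits)
print(matching < diverging)  # a student that mimics the teacher scores lower
```

A higher temperature flattens both distributions, so the student also learns from the relative scores the teacher gives to incorrect classes.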

Prompt engineering: For generative models, prompt engineering shapes visual content output without weight changes - using positive/negative prompts, guidance scale, and iterative refinement to control lighting, composition, and style.
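The guidance scale mentioned above is commonly implemented with classifier-free guidance, where the denoiser is run with and without the prompt and the two predictions are combined. The sketch below assumes that formulation; `apply_guidance` is a hypothetical helper and the noise predictions are plain lists standing in for tensors.

```python
def apply_guidance(unconditional, conditional, guidance_scale):
    """Classifier-free guidance: extrapolate from the unconditional
    prediction toward (and past) the prompt-conditioned prediction."""
    return [
        u + guidance_scale * (c - u)
        for u, c in zip(unconditional, conditional)
    ]


unconditional_prediction = [0.0, 2.0]  # denoiser output without the prompt
conditional_prediction = [1.0, 4.0]    # denoiser output with the prompt

guided = apply_guidance(unconditional_prediction, conditional_prediction, 7.5)
print(guided)  # [7.5, 17.0]
```

A scale of 1.0 reproduces the conditional prediction, while larger values follow the prompt more strongly at some cost to diversity. In many pipelines a negative prompt is handled by using its embedding in place of the empty prompt for the unconditional branch.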

Domain-specific platforms - such as Landing AI's LandingLens for manufacturing defect detection - combine fine-tuning with few-shot techniques to adapt large vision models to specialized computer vision tasks like land cover analysis and industrial quality inspection.

Applications and Use Cases of Large Vision Models

Large vision models now underpin applications across many industries.

Image Recognition and Visual Search

Large vision models excel at image classification and visual search at scale. CLIP enables text-driven retrieval across millions of images without manual tags - users can query a database of satellite imagery for "flood-damaged infrastructure" or search product catalogs using photos. DINOv2 embeddings support fine-grained image recognition for species identification, medical anomaly detection, and retail product matching.

Facial recognition systems built on ViT-based large vision models handle identification across varied lighting, age, and expression. These computer vision models require careful evaluation for bias, as large vision models can inherit unfair patterns from training data - particularly significant for facial recognition in sensitive applications.

Object Detection, Segmentation, and Autonomous Vehicles

Object detection and segmentation are critical for autonomous vehicles, which interpret visual cues to recognize pedestrians, other vehicles, road signs, and lane markings for safe navigation. Combining SAM2's segmentation and tracking with DINOv2 features can support object detection and tracking in dashcam footage and other video data.

SAM2 enables interactive medical imaging workflows - radiologists can refine segmentation of lung nodules or tumors in CT scans in real time, with the model propagating corrections across frames. SAM2 also handles quality inspection tasks: segmenting circuit board components for defect detection, with CLIP classifying defect types from natural language prompts. For aerial and satellite imagery, DINOv2 features support segmentation for land cover analysis, crop boundaries, and infrastructure monitoring.

Autonomous vehicles also benefit from analyzing images across diverse sensor inputs - cameras, LiDAR-fused vision, and dashcams - where large vision models process visual information from multiple viewpoints simultaneously.

Vision-Language Applications

Vision language models enable image captioning, visual question answering, and multimodal reasoning. LLaVA-NeXT can answer questions about visual content - "How many workers are wearing safety gear?" - by interpreting both objects and context. Gemini 2.5 Pro processes complex visual inputs such as charts and documents alongside natural language queries.

CLIP powers content-based visual search: users describe what they're looking for in natural language, and the system retrieves matching images from large databases. In retail, this enhances the shopping experience through recommendation systems that process visual information alongside user intent.

Many LVMs are multimodal, meaning they can process visual information and natural language together, allowing them to describe scenes or answer questions about an image - enabling new workflows for accessibility, education, and knowledge work.

Generative and Creative Applications

Stable Diffusion and FLUX.1 support a wide range of content creation tasks: text-to-image generation, photo and video enhancement, video editing, and game development asset creation. FLUX.1 Kontext enables targeted image editing from text instructions. Sora generates coherent video clips from text prompts for use in film pre-visualization and advertising.

Game development pipelines use these large vision models to generate environment textures, character concepts, and UI assets from textual descriptions - accelerating iteration without requiring manual illustration for every variant.

Limitations and Challenges of Large Vision Models

Despite their capabilities, large vision models face significant challenges:

- Computational demands: Training and deploying large vision models requires significant computational resources. Model training costs for the largest models run to millions of dollars, and computational demands for inference remain high at scale.

- Data bias: Large vision models inherit biases from training data, leading to unfair outcomes in applications like facial recognition. Diverse and representative training data is essential to mitigate this, but collecting it is difficult at the scale these models require.

- Interpretability: The complexity of deep neural networks makes it difficult to understand how large vision models process visual information or arrive at outputs - a challenge for safety-critical applications including medical imaging and autonomous vehicles.

- Privacy: Using large vision models in surveillance applications raises significant privacy concerns that must be balanced against technical capabilities.

- Reasoning limitations: Current large vision models struggle with logical inference, commonsense reasoning, and complex tasks requiring contextual understanding across modalities and time.

- Domain adaptation: Despite versatility, most large vision models require fine-tuning or prompt engineering for optimal performance on specialized visual tasks.

Future Trends and Research Directions for Large Vision Models

Several directions are shaping the next generation of large vision models:

Domain-specific foundation models: Models tailored for healthcare (BioViL-T), geospatial analysis, and industrial computer vision tasks will serve as specialized base models, reducing fine-tuning costs for domain-specific applications.

Deeper multimodal fusion: Large vision models are integrating audio, video data, and temporal signals. Qwen2.5-VL (March 2025) handles hour-long videos with event pinpointing - pointing toward large vision models capable of continuous environmental understanding.

Streaming perception for autonomous vehicles and robotics: Future large vision models will process real-time visual inputs continuously, enabling autonomous vehicles and robotic agents to maintain situational awareness through persistent visual understanding. Related work: RT-2, Perceiver IO.

3D vision: Large vision models are expanding into 3D scene understanding - learning from point clouds to reconstruct scenes or generate 3D content. Related work: NeRF, GNT.

Efficient architectures: AIMv2's sequential modeling approach and dynamic resolution models like Qwen2.5-VL point toward training competitive large vision models without massive infrastructure - broadening access to model training at scale.

Conclusion: Large Vision Models

Large vision models have moved from academic research into production workflows across healthcare, manufacturing, content creation, and autonomous vehicles. The shift from convolutional neural networks to Transformer-based architectures - and now toward autoregressive sequential approaches like AIMv2 - has unlocked scale and flexibility that were previously out of reach.

Models like SAM2, DINOv3, LLaVA-NeXT, and FLUX.1 represent the current state of large vision models, each advancing how machines interpret and generate visual content. As computational demands continue to be addressed through more efficient model training processes, large vision models will reach new domains and use cases. For teams building on these models, the key challenges remain data quality, domain adaptation, and responsible deployment at scale.

Lightly in Action: Accelerating Your Large Vision Model Development

Building and deploying large vision models requires high-quality datasets, efficient model training, and optimized workflows. Lightly addresses this at two stages.

LightlyTrain is a framework for self-supervised pretraining, fine-tuning, distillation, and autolabeling on domain-specific visual data. It supports YOLO (v8, v11), ViTs, RT-DETR, DINOv3, ResNet, Faster R-CNN - no labeled data required for pretraining. By leveraging unlabeled image data before fine-tuning, teams reduce labeled data requirements and accelerate deployment. The model training process integrates into existing pipelines with minimal configuration. Available under AGPL-3.0 and commercial licensing.

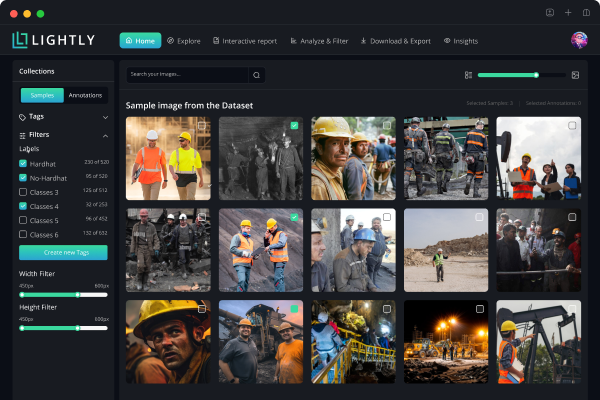

LightlyStudio is a unified data curation and labeling platform for image and video workflows, with on-prem deployment and enterprise controls. Features include embedding-based data selection, active learning, near-duplicate detection, built-in annotation and QA, and a Python-first SDK for integration into training pipelines. The open-source core is free to use locally.
