The 10 Best Voxel51 Alternatives in 2026: A Practical Guide for ML Teams


A practical guide to the ten most credible alternatives to Voxel51's FiftyOne for computer vision and ML teams in 2026. The article breaks down each platform's strengths, weaknesses, and ideal use case — covering open-source tools, enterprise SaaS, and hybrid options. It also includes a comparison table and a decision framework to help teams choose based on bottleneck, deployment constraints, and user roles. Written for ML engineers, data scientists, and technical leads evaluating their data curation and annotation stack.

FiftyOne is a powerful dataset curation and visualization tool, but it's not the right fit for every team. Whether your bottleneck is annotation throughput, enterprise security, model training, or non-technical usability, there are strong alternatives purpose-built for those needs.

Why teams look elsewhere: FiftyOne has no native training layer, enterprise pricing can be steep, and the Python-first UX excludes non-technical labelers and reviewers.

Best all-in-one (curation + annotation + training): Lightly (LightlyStudio + LightlyTrain) is the only platform that adds self-supervised pretraining on top of curation — customers report 50%+ cuts in training cost.

Best enterprise replacement: Encord is the closest head-to-head competitor, with native annotation, broad multimodal support, and SOC2 / HIPAA / GDPR.

Best open-source picks: CVAT for frame-by-frame video and image labeling; Label Studio for multimodal datasets across text, audio, images, and time series.

Best for a specific job: Roboflow for shipping YOLO models fast, V7 for DICOM and medical imaging, Visual Layer for petabyte-scale curation.


Below are the 10 alternatives worth shortlisting in 2026: what each does, who it fits, and where it falls short.


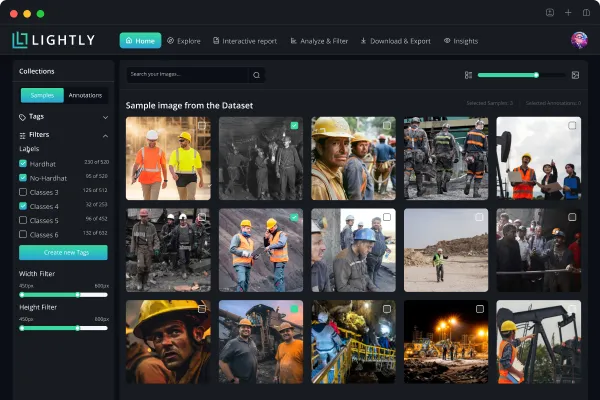

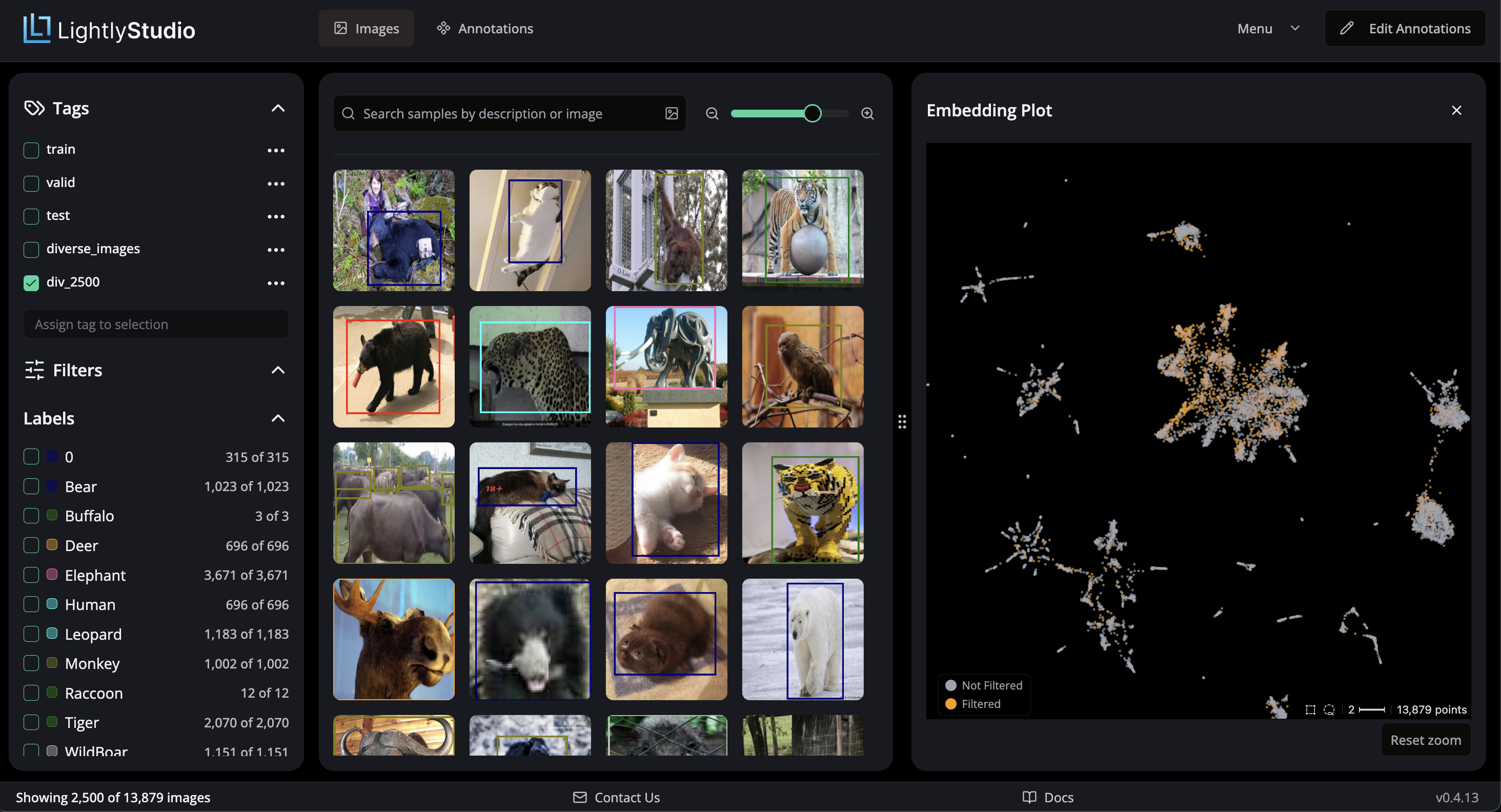

1. Lightly (LightlyStudio + LightlyTrain)

Lightly is an AI data curation company spun out of ETH Zurich. It treats curation and pretraining as one problem: Lightly AI uses self-supervised learning to identify valuable data clusters across unlabeled datasets and create training-ready samples while cutting labeling costs.

LightlyStudio is the open-source core: a unified platform for labeling, curation, QA, and dataset management. Built in Rust for speed, it handles COCO or ImageNet datasets on a laptop using embeddings, diversity sampling, metadata filtering, and active learning. LightlyTrain pretrains DINOv2/v3 vision foundation models on your unlabeled data, then fine-tunes YOLO, RT-DETR, or ViT models for detection and edge use. No other platform on this list pretrains foundation models on your data.
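To make "diversity sampling" concrete, here is a minimal sketch of one common approach: greedy farthest-point (k-center) selection over embeddings. It is a generic illustration in plain Python, not LightlyStudio's implementation or API; the function name `farthest_point_sample` and the toy 2-D "embeddings" are assumptions made for the example.

```python
import math

def farthest_point_sample(embeddings, k, start=0):
    """Greedy k-center selection: repeatedly pick the point that is
    farthest from everything selected so far."""
    if not 0 < k <= len(embeddings):
        raise ValueError("k must be between 1 and the number of embeddings")
    selected = [start]
    # Distance from every point to its nearest already-selected point.
    min_dist = [math.dist(e, embeddings[start]) for e in embeddings]
    while len(selected) < k:
        nxt = max(range(len(embeddings)), key=lambda i: min_dist[i])
        selected.append(nxt)
        for i, e in enumerate(embeddings):
            min_dist[i] = min(min_dist[i], math.dist(e, embeddings[nxt]))
    return selected

# Hypothetical toy data: two tight clusters plus one outlier.
points = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (5.0, 5.0), (5.1, 5.0), (10.0, 0.0)]
picked = farthest_point_sample(points, k=3)
print(picked)  # indices of one sample per region
```

With a budget of three samples, the selection lands on one point per region instead of three near-identical points from the first cluster, which is the behavior a labeling budget benefits from.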


2. Encord

Encord is the most direct head-to-head competitor at the enterprise end: a unified data platform for managing, curating, and annotating datasets for AI applications. Modality support is broad, covering images, video, audio, text, HTML, and DICOM. Its enterprise security credentials include SOC2 Type II, HIPAA, and GDPR. For 2026 the company leaned into 3D and physical AI data, with LiDAR + RGB fusion for autonomous driving customers.

💡 Pro Tip: Looking to compare Encord against Lightly directly? Read our deep-dive: The 10 Best Encord Alternatives in 2026.

3. Roboflow

Roboflow covers the entire computer vision workflow: data collection, labeling, augmentation, dataset management, training, and edge deployment. It is particularly popular for YOLO-based object detection. It hosts tens of thousands of public datasets via Roboflow Universe, and its annotation tools ship with SAM-based model-assisted labeling that automates repetitive work.

💡 Pro Tip: Roboflow + Ultralytics is the most common stack we see teams switch from. If that's you, see Best Ultralytics Alternatives in 2026 for free / open licensing options.

4. SuperAnnotate

SuperAnnotate is known for high-quality annotation tools and active learning capabilities for fine-tuning models. It handles images, text, audio, and LiDAR, with workflow and dataset management features. The company also offers a managed workforce inside the platform for overflow volume.

Strengths: QA dashboards, role-based user management, integrated workforce, quality control, integration with training pipelines. Weakness: curation and model evaluation are lighter than in FiftyOne or Lightly.

Best for: organizations with high-volume data annotation needs.

💡 Pro Tip: SuperAnnotate's strength is throughput on routine labeling tasks. Pair it with a curation tool like LightlyStudio upstream to avoid labeling near-duplicates.
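The upstream deduplication step in this tip can be sketched as a cosine-similarity filter over embeddings. This is a generic, quadratic-time illustration and not the API of LightlyStudio or SuperAnnotate; the function names, the `0.98` threshold, and the toy vectors are assumptions, and a real pipeline would tune the threshold and use an approximate nearest-neighbor index at scale.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def drop_near_duplicates(embeddings, threshold=0.98):
    """Keep a sample only if it is not too similar to any sample already kept.
    Returns the indices of the samples worth sending to labeling."""
    kept = []
    for i, emb in enumerate(embeddings):
        if all(cosine(emb, embeddings[j]) < threshold for j in kept):
            kept.append(i)
    return kept

# Hypothetical embeddings: frames 0/1 and 2/3 are near-identical pairs.
frames = [(1.0, 0.0), (0.999, 0.01), (0.0, 1.0), (0.01, 1.0), (0.7, 0.7)]
to_label = drop_near_duplicates(frames)
print(to_label)  # indices that survive deduplication
```

Here two of the five frames are filtered out before they reach annotators, so the labeling budget is spent on distinct content.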

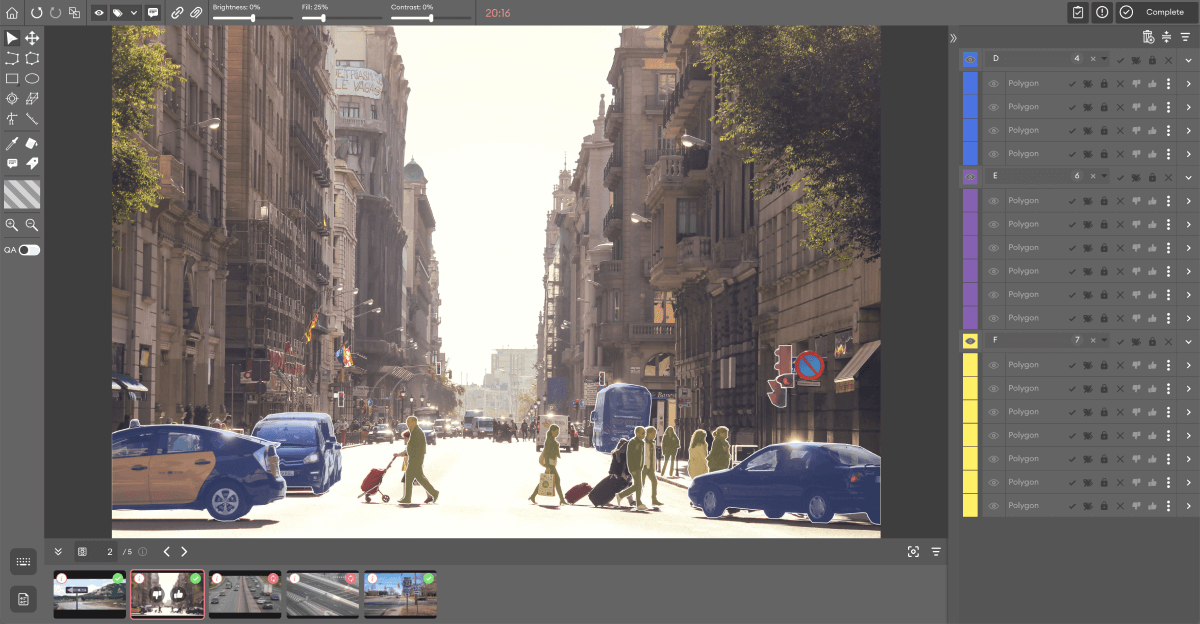

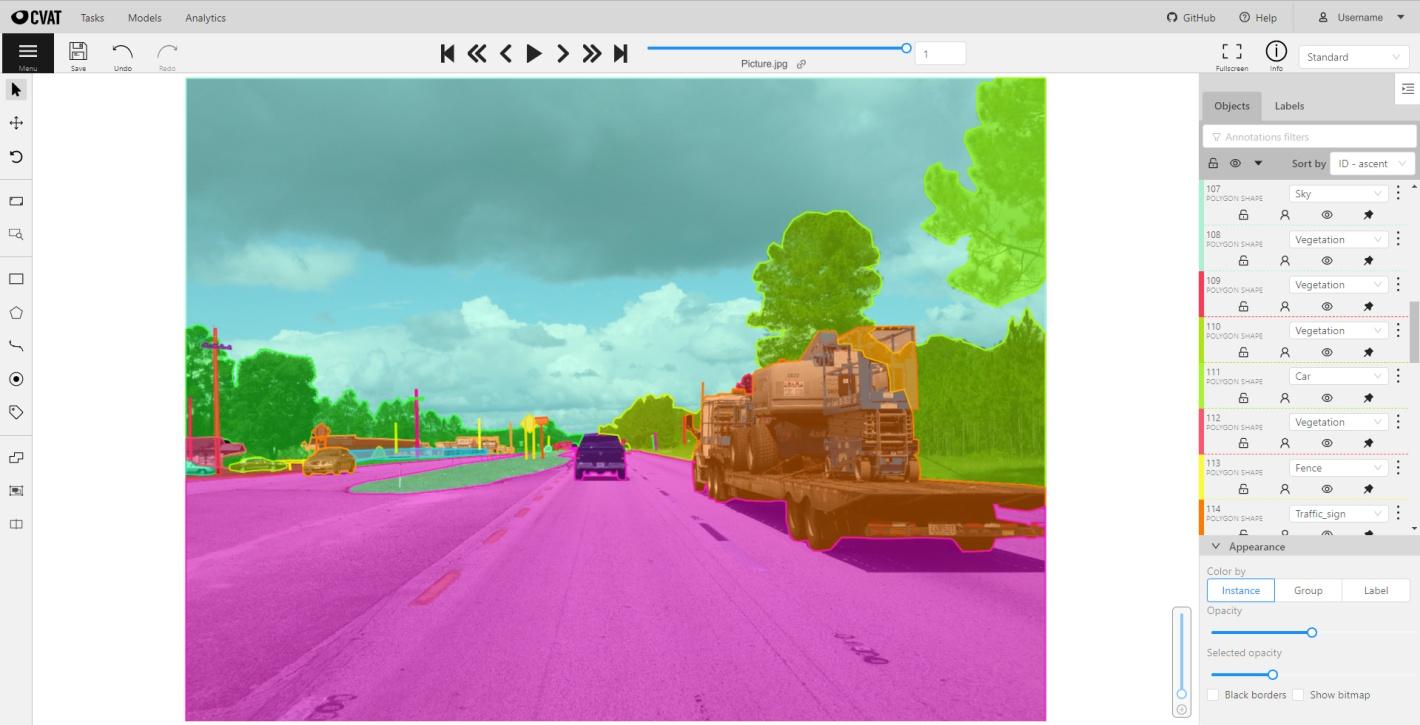

5. CVAT

CVAT (Computer Vision Annotation Tool) is the leading open-source choice for frame-by-frame video and image labeling with auto-annotation support. It handles classification, detection, tracking, pose estimation, 3D point cloud labels, and masks. CVAT plus a separate visualization layer is the open-source answer to replacing FiftyOne.

💡 Pro Tip: Looking for CVAT alternatives, or ways to extend it? Check out our Best CVAT Alternatives in 2026.

6. Label Studio

Label Studio is multimodal from the ground up: text, images, audio, time series, and structured datasets all use the same labeling framework. Community Edition is free; Enterprise adds SSO, workflow management, and support.

💡 Pro Tip: Label Studio is best when modalities span beyond CV. For pure vision workflows with smart curation built in, LightlyStudio is usually a better fit.

7. Labelbox

Labelbox is an enterprise cloud platform with quality assurance (QA) and model-assisted labeling workflows. It offers dataset versioning, active learning integration, and consensus-based QA to produce reliable ground truth labels. It supports images, text, and geospatial datasets with a mature API and SDK; customers can monitor model predictions and surface labeling errors through analytics.
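As a rough illustration of what consensus-based QA means in practice, the sketch below takes several annotators' labels for one item, picks the majority label, and flags the item for review when agreement is low. It is a generic example, not Labelbox's SDK; the function name `consensus` and the `min_agreement` threshold are assumptions.

```python
from collections import Counter

def consensus(votes, min_agreement=0.6):
    """Return (majority_label, agreement, needs_review) for one item.

    votes: list of labels, one per annotator.
    agreement: fraction of annotators who chose the majority label.
    """
    if not votes:
        raise ValueError("at least one vote is required")
    label, count = Counter(votes).most_common(1)[0]
    agreement = count / len(votes)
    return label, agreement, agreement < min_agreement

# Hypothetical votes from three annotators on two images.
clear_case = consensus(["cat", "cat", "dog"])
disputed_case = consensus(["cat", "dog", "bird"])
print(clear_case, disputed_case)
```

Items that fall below the agreement threshold go to a reviewer instead of straight into the ground truth set.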

💡 Pro Tip: Labelbox pricing scales aggressively with usage. If cost is a concern, evaluate LightlyStudio (open-source core) or CVAT before locking in.

8. V7

V7 is a company specializing in fast, high-quality labels for video and medical imaging datasets. V7 Darwin supports DICOM and WSI (whole-slide imaging) with AI-assisted labeling, interpolation, and object tracking tuned for complex masks. V7's Workflows compose labeling, review, and ML-assisted steps into reproducible data pipelines that automate the process.
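Interpolation in video labeling usually means the annotator draws a box on two keyframes and the tool fills in the frames between them. The sketch below shows the simplest version, linear interpolation of box coordinates. It is a generic illustration, not V7's implementation; the function name and the `(x1, y1, x2, y2)` box format are assumptions.

```python
def interpolate_boxes(start_frame, start_box, end_frame, end_box):
    """Linearly interpolate a bounding box between two keyframes.

    Boxes are (x1, y1, x2, y2) tuples. Returns {frame_index: box} for every
    frame from start_frame to end_frame inclusive.
    """
    span = end_frame - start_frame
    if span <= 0:
        raise ValueError("end_frame must come after start_frame")
    boxes = {}
    for frame in range(start_frame, end_frame + 1):
        t = (frame - start_frame) / span  # 0.0 at the first keyframe, 1.0 at the last
        boxes[frame] = tuple(a + t * (b - a) for a, b in zip(start_box, end_box))
    return boxes

# Hypothetical keyframes: the object moves right and down over four frames.
track = interpolate_boxes(0, (0, 0, 10, 10), 4, (8, 4, 18, 14))
print(track[2])  # box at the midpoint frame
```

Linear interpolation works for smooth motion; fast or non-linear motion needs more keyframes or a tracker, which is where tool-assisted tracking earns its keep.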

💡 Pro Tip: V7 dominates DICOM and WSI, but its curation is thin. Many medical CV teams pair V7 for labels with FiftyOne, LightlyStudio, or Visual Layer for dataset analysis.

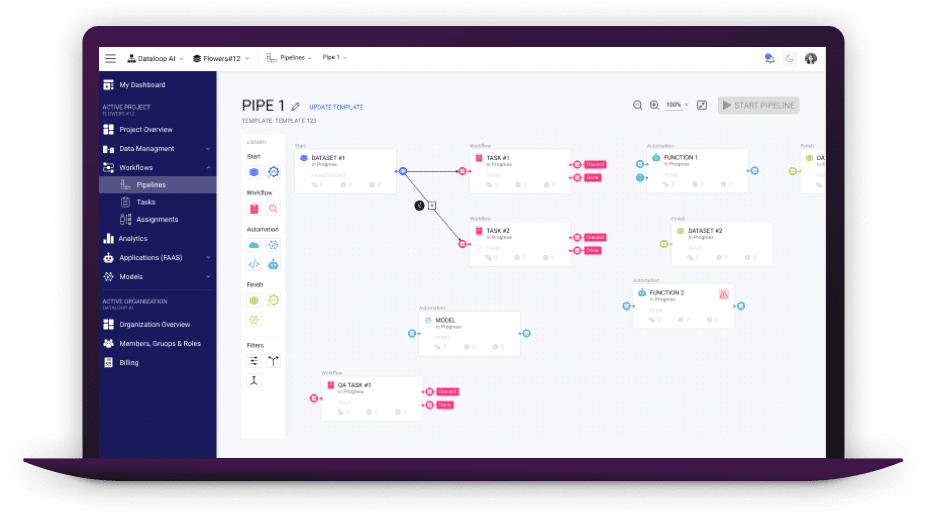

9. Dataloop

Dataloop combines data annotation across multimodal data types (images, audio, text, LiDAR), automated preprocessing, and event-driven data pipelines via a Python SDK. Teams can explore and manage datasets through a marketplace of models and workflow templates.

💡 Pro Tip: Dataloop's surface area is large — only worth the setup if you'll use most of it. Smaller teams often get more value from a focused stack (e.g., LightlyStudio + CVAT).

10. Visual Layer

Visual Layer is a production-grade tool for searching, filtering, deduplicating, and visualizing massive image and video datasets. Co-founded by the creators of fastdup, it handles smart clustering, quality analysis, semantic search, and automatic enrichment (captions, bounding boxes, labels) using foundation models. The company offers strong security controls and on-prem setups to manage enterprise data.

💡 Pro Tip: Visual Layer doesn't label, so you'll still need CVAT, Labelbox, or LightlyStudio for that. If you want curation + labeling in one tool, LightlyStudio covers both.

How to choose the right alternative

Three questions resolve most decisions:

- What is your real bottleneck? If understanding datasets is the issue — duplicates, wrong labels, class imbalance — Voxel51, Lightly, and Visual Layer lead. If throughput and quality control on labels are the issue, explore Encord, SuperAnnotate, V7, Labelbox, or CVAT. If you want better AI models on less labeled data, Lightly is uniquely positioned: LightlyTrain pretrains foundation models on your unlabeled datasets.

- What are your deployment constraints? Regulated industries (healthcare, autonomous systems, defense) usually need on-prem. Lightly, Encord, CVAT, and Label Studio support self-hosted setups. V7 is commercial only; Roboflow is SaaS-first but supports self-hosted inference and edge deployment.

- Who else uses the platform? ML engineers use the SDK. Labelers use the web UI to create labels. Reviewers monitor dashboards. FiftyOne is Python-first; Encord and LightlyStudio are designed for technical and non-technical users alike.

What to look for in a FiftyOne alternative

A few things actually matter when you're shortlisting:

- Multimodal support — images, 3D point clouds, video, and metadata in one workspace.

- Native annotation vs. an external integration you have to wire up yourself.

- Scale — how the tool handles datasets with millions of samples.

- Security — SOC2 Type II, HIPAA, and GDPR if you're in a regulated industry.

- Curation depth — embedding search, duplicate detection, and tools to find labeling errors.

- Automation — model-assisted pre-labels and automated quality checks, not just manual workflows.

- Non-technical usability — can domain experts and reviewers actually use the platform without engineering help?
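For the "tools to find labeling errors" item under curation depth, one simple heuristic is to compare each given label with a model's predicted probabilities and flag samples where the model confidently disagrees. The sketch below is a generic illustration, not any listed vendor's method; the function name, the `max_confidence` threshold, and the sample data are assumptions.

```python
def flag_suspect_labels(samples, max_confidence=0.2):
    """Flag samples whose given label the model finds implausible.

    samples: iterable of (sample_id, given_label, {label: probability}).
    A sample is flagged when the model's top prediction differs from the
    given label AND the probability of the given label is at most
    max_confidence. Results are sorted most suspicious first.
    """
    suspects = []
    for sample_id, given, probs in samples:
        p_given = probs.get(given, 0.0)
        predicted = max(probs, key=probs.get)
        if predicted != given and p_given <= max_confidence:
            suspects.append((sample_id, given, predicted, p_given))
    return sorted(suspects, key=lambda s: s[3])

# Hypothetical model outputs for three labeled images.
predictions = [
    ("img_001", "cat", {"cat": 0.95, "dog": 0.05}),  # model agrees
    ("img_002", "cat", {"cat": 0.05, "dog": 0.95}),  # model strongly disagrees
    ("img_003", "dog", {"cat": 0.55, "dog": 0.45}),  # ambiguous, not flagged
]
suspects = flag_suspect_labels(predictions)
print(suspects)
```

The output is a review queue, not a verdict: a flagged sample may be a labeling error or a model mistake, so a human still makes the call.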

Final recommendations

If you just want our shortlist:

- Closest direct FiftyOne replacement with annotation and enterprise compliance: Encord is the platform to shortlist first.

- Data efficiency and model training: Lightly. LightlyStudio + LightlyTrain combine curation, annotation, and self-supervised pretraining. Start with LightlyStudio or LightlyTrain; no sales call required.

- Fastest time-to-deployed-model: Roboflow.

- Open-source and self-hosted: CVAT or Label Studio.

- Segmentation-heavy or medical imaging: V7.

Whichever one you pick, benchmark it on your own data before you commit. Every vendor on this list will promise you a 10x lift. Your data is the only thing that'll tell you who actually delivers.
