5 Best Data Annotation Companies in 2026 [AI Training Data Services & Pricing]

A practical guide to the top data annotation companies in 2026: Lightly AI (computer vision tooling), Surge AI (LLM alignment and RLHF), iMerit (regulated domains like medical and autonomous vehicles), Aya Data (flexible multi-modality annotation), and Cogito Tech (multimodal with compliance documentation). Covers pricing benchmarks, how to choose the right annotation partner, why annotation remains genuinely difficult, and other providers worth knowing including Scale AI, Appen, Labelbox, and TELUS International AI.

Demand for high-quality AI training data keeps growing, and the annotation market has shifted in 2026 — foundation models now handle routine pre-labeling, pushing human expertise toward edge cases, subjective judgment, and regulated domains. Here are the five best data annotation companies in 2026 and how to choose the right one for your project.

- Top companies: Lightly AI, Surge AI, iMerit, Aya Data, and Cogito Tech — each strong in different areas: CV tooling, LLM alignment, regulated domains, flexible services, and compliance-heavy annotation respectively.

- Lightly AI: Best for CV teams cutting annotation costs through smarter data selection (LightlyStudio) and label-efficient model training (LightlyTrain with self-supervised pretraining).

- Surge AI: Best for labs aligning or evaluating frontier LLMs at scale via RLHF, preference ranking, and red-teaming — serves OpenAI, Google, Anthropic, and Microsoft.

- iMerit: Best for enterprises in healthcare, autonomous vehicles, or geospatial needing audit-ready annotation with credentialed domain specialists (e.g., board-certified pathologists).

- Aya Data: Best for mid-scale projects needing flexible, tool-agnostic annotation across CV, NLP, and agricultural AI, with GDPR and HIPAA compliance plus SOC 2 and ISO 9001 certifications.

- Cogito Tech: Best for compliance-focused organizations spanning multiple modalities (images, video, audio, text, DICOM, LiDAR) with provenance documentation via its DataSum framework.

- How to choose: Match domain expertise to your use case, verify security certifications and regulatory compliance (ISO 27001, SOC 2, HIPAA, GDPR), assess the quality of AI-assisted workflows, and always run a paid pilot before committing.

- Pricing benchmarks: Simple bounding boxes run $0.02–$0.09 per object; managed annotation services $6–$12/hr; expert RLHF or medical annotation $50–$100/example.
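The benchmarks above mix per-object and hourly pricing, which are hard to compare directly. A minimal sketch, assuming a hypothetical annotator throughput of 150 simple boxes per hour (not a figure from any provider), converts an hourly rate into an effective per-object cost:

```python
def effective_cost_per_object(hourly_rate: float, objects_per_hour: float) -> float:
    """Convert an hourly managed-service rate into an effective cost per labeled object."""
    if objects_per_hour <= 0:
        raise ValueError("objects_per_hour must be positive")
    return hourly_rate / objects_per_hour


# Hypothetical throughput for simple bounding boxes; replace with a measured figure.
ASSUMED_BOXES_PER_HOUR = 150

for rate in (6.0, 12.0):  # managed-service hourly range from the benchmarks above
    per_box = effective_cost_per_object(rate, ASSUMED_BOXES_PER_HOUR)
    print(f"${rate:.0f}/hr -> ${per_box:.3f} per box")
```

Under that assumption, $6–$12/hr works out to $0.04–$0.08 per box, inside the quoted per-object range. The result is only as good as the throughput figure, so measured throughput on your own data is worth requesting during a pilot.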

Demand for high-quality training data keeps growing as organizations move AI systems from research into production. At the same time, the economics are shifting: foundation models now handle routine pre-labeling at scale, pushing human expertise toward edge cases, subjective tasks, and regulated domains where errors carry real consequences. Compliance and provenance requirements are also tightening across healthcare, finance, and autonomous systems.

Choosing the right AI data annotation partner, whether for natural language processing, generative AI development, or LLM evaluation, directly affects the quality of the annotated datasets your models are trained on and your overall compliance posture. This article profiles five leading annotation companies in 2026, explains how to choose an annotation services provider and why annotation remains genuinely difficult, and notes other players worth knowing.

Lightly AI — Computer Vision Annotation and Model Training Tooling

Lightly AI (ETH Zurich spin-off) provides data annotation services and software tools purpose-built for computer vision projects. Its 2025–2026 product suite centers on two main tools.

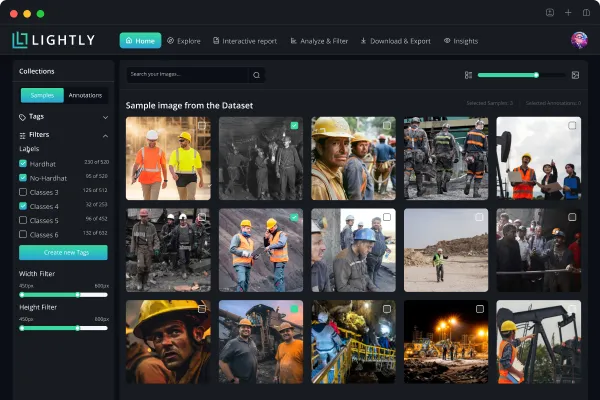

LightlyStudio (launched December 2025) is an open-source (Apache 2.0) platform for curating and labeling image and video data. It combines embedding-based data selection, near-duplicate detection, built-in annotation and QA workflows, active learning, and a plugin system in one environment. The backend was rebuilt for performance using DuckDB and Rust components, and the platform ships with a Python-first SDK. It runs locally or self-hosted; a hosted cloud version is in development. LightlyStudio is designed to handle large data volumes, making it practical for teams managing millions of images or thousands of video sequences.
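LightlyStudio's internal implementation is not described here, but the general idea behind embedding-based near-duplicate detection can be sketched in a few lines. This minimal example assumes you already have one embedding vector per image (for instance from a self-supervised model); the function name and the 0.95 cosine-similarity threshold are illustrative assumptions, not LightlyStudio's API or defaults.

```python
import numpy as np


def find_near_duplicates(embeddings: np.ndarray, threshold: float = 0.95) -> list[int]:
    """Return indices of samples to keep, dropping near-duplicates.

    Hypothetical helper (not a LightlyStudio API). Two samples count as
    near-duplicates when the cosine similarity of their embeddings is >= threshold;
    the first sample of each near-duplicate group is kept.
    """
    # L2-normalize rows so a dot product equals cosine similarity.
    norms = np.linalg.norm(embeddings, axis=1, keepdims=True)
    normed = embeddings / np.clip(norms, 1e-12, None)

    kept: list[int] = []
    for i in range(len(normed)):
        # Compare the candidate against every sample kept so far.
        if kept and (normed[kept] @ normed[i]).max() >= threshold:
            continue  # too similar to an already-kept sample
        kept.append(i)
    return kept


# Three orthogonal "images" plus a slightly perturbed copy of the first one.
base = np.eye(3, 8)
emb = np.vstack([base, base[0] + 0.01])
print(find_near_duplicates(emb))  # [0, 1, 2]
```

The greedy loop compares each candidate against everything kept so far, which is fine for a sketch; at the scale of millions of images, an approximate nearest-neighbor index would be the more likely choice.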

LightlyTrain (v0.14.0, January 2026) is a framework for self-supervised pretraining and knowledge distillation from foundation models (DINOv2, DINOv3) into production architectures including YOLOv8 and YOLO11, ViT, RT-DETR, Faster R-CNN, and ConvNeXt. It supports image classification, segmentation (including semantic segmentation), and object detection, with object tracking improvements in recent releases. Pretraining needs no labeled data, which makes it well suited to domains where labeling is expensive. Its autolabeling capabilities label images automatically, reducing the volume of precise annotations required from human annotators. It is licensed under AGPL-3.0, with commercial licensing available.

Alongside its software, Lightly provides managed annotation services and RLHF with domain experts across 20+ fields, helping teams produce high-quality labels for model training at scale. Published case studies include a 4x reduction in annotation overhead for drone survey company Wingtra and a 50% reduction in curation time for industrial video provider Lythium.

Limitations: LightlyTrain requires Python and ML familiarity, and use outside the AGPL-3.0 terms requires a separate commercial license. LightlyStudio focuses primarily on image and video; verify audio and text annotation support for your specific use case before committing.

Best for: CV teams wanting to cut annotation costs through smarter data selection and label-efficient model training.

Surge AI — High-Quality AI Data Annotation for Large Language Models

Founded in 2020 and bootstrapped until mid-2025, Surge AI has grown to an estimated $1.4B annual revenue run rate serving frontier AI labs including OpenAI, Google, Anthropic, and Microsoft. Its roughly one million vetted contractors handle RLHF, preference ranking, red-teaming, and safety evaluation: the structured human feedback tasks that underpin large language model training. Contractors are matched to tasks by domain expertise and earn above-average rates for the data labeling industry, which the company credits for the consistently high quality of its output.

In 2025, the company published two public benchmarks: AdvancedIF (instruction-following, developed with Meta Superintelligence Labs) and Hemingway-bench (writing quality, evaluated by expert human judges). Because they are public, outsiders can inspect the company's quality standards directly, which is relatively rare in an industry that relies heavily on self-reported metrics. The platform also supports sentiment analysis, named entity recognition, and structured model evaluation across domains including law, medicine, and STEM, extending well beyond simple preference labeling.

In July 2025, the company began its first external fundraise, reportedly targeting $1B at a $15–25B valuation. The same period brought a class-action lawsuit alleging contractor misclassification and the leak of two internal documents covering training guidelines and contractor resource policies. Both issues remain unresolved and warrant due diligence from prospective customers.

Limitations: Pricing is custom-quoted only. Not suited for computer vision annotation. Contractor classification and transparency concerns are live issues.

Best for: Labs and enterprises aligning or evaluating frontier LLMs at scale.

iMerit — Accurate Data Annotation for Regulated and High-Stakes Domains

iMerit (founded 2012, San Jose) offers expert-led data annotation, model fine-tuning, and validation services across medical imaging, autonomous vehicles, and geospatial data. Its Ango Hub platform, acquired with Ango.ai in 2023, handles image, video, audio, text, LiDAR, and DICOM data with AI-assisted pre-labeling and QA workflows for both computer vision and NLP pipelines. Annotation methods include bounding boxes, polygons, segmentation (including semantic segmentation), and keypoints, covering a wide range of computer vision tasks. The 7,000+ workforce includes credentialed domain specialists: board-certified pathologists for medical image annotation and sensor fusion specialists for autonomous vehicle projects.

Its 2025 Scholars program focuses on long-term retention of expert annotators for complex generative AI and LLM tasks, positioning iMerit as a quality-over-volume alternative to gig-based platforms. The company reports 91% expert annotator retention and has expanded into RLHF, red-teaming, and data collection for foundation model datasets. Clients include major autonomous vehicle developers and medical device companies operating under FDA and EMA requirements. For highly regulated AI projects, iMerit's credentialed workforce and structured QA make it one of the more defensible choices in the market.

Limitations: Managed service only — onboarding is slow for small or short-term projects. Pricing is not published. Ango Hub has a learning curve that typically requires dedicated annotator training.

Best for: Enterprises in healthcare, autonomous vehicles, or geospatial needing audit-ready, accurate data annotation at scale.

Aya Data — Flexible Data Labeling Services Across CV, NLP, and Agricultural AI

Aya Data (founded 2021, London) provides data labeling services and AI consulting, drawing on annotator networks primarily across Africa. Services cover image annotation, text annotation, 3D cuboids, audio annotation including transcription, and LLM evaluation and RLHF. The company is tool-agnostic, selecting annotation platforms (V7, SuperAnnotate, Kognic, and others) based on project needs rather than a fixed stack, a genuine differentiator for teams that have already invested in a particular tooling ecosystem. Aya Data reports GDPR and HIPAA compliance alongside SOC 2 and ISO 9001 certifications.

Its AyaGrow product targets agricultural AI, using drone imagery to monitor crop health and guide interventions. In one published case, the system helped raise germination rates from approximately 72% to 94% for an environmental reforestation client. The company has also handled annotation for autonomous vehicle LiDAR data in Kenya and for fashion retail AI in Europe. Data collection and processing are handled end-to-end, from raw data ingestion to model-ready labeled outputs that clients can use to train and evaluate models.

Limitations: Smaller operation — confirm capacity before committing to high-volume projects. Published accuracy metrics are self-reported. Pricing is not listed publicly.

Best for: Mid-scale projects needing flexible, competitively priced annotation with tool-agnostic workflows, or organizations that value impact sourcing and annotator diversity.

Cogito Tech — Multimodal Data Annotation Services for AI and Machine Learning

Cogito Tech (founded 2011, Levittown, NY) covers the widest modality range of the five: images, video, audio, text, DICOM, and LiDAR. It has appeared on the Financial Times list of The Americas' Fastest-Growing Companies for three consecutive years (2024–2026). Its DataSum framework provides traceability and ethical sourcing documentation, which is increasingly relevant as AI transparency regulations tighten globally. Its Global Innovation Hubs place domain-specialist annotators in-country rather than routing work to generic pools, improving domain accuracy for projects that span diverse geographies.

LLM services include RLHF, prompt engineering, fine-tuning, and red-teaming. Clients including Medtronic, Siemens, Verizon, OpenAI, and Amazon use Cogito for training and evaluation data across regulated industries. Beyond NLP and vision, Cogito handles speech recognition datasets, fraud detection data labeling, and audio transcription for conversational AI, making it one of the more versatile providers for teams with diverse project needs. Its image classification and polygon annotation work also supports e-commerce and retail models trained on visual data.

Limitations: Pricing is not public; projects are custom-quoted. The company relies on third-party annotation platforms, which limits tooling flexibility. Quality consistency across delivery hubs warrants verification through a pilot project.

Best for: Organizations needing broad multimodal data annotation with compliance documentation and data provenance built in.

Other Providers Worth Knowing

Scale AI is the largest enterprise annotation platform by revenue. In June 2025, Meta took a 49% stake and hired CEO Alexandr Wang to lead its Superintelligence Labs. Several major customers reportedly reduced Scale AI engagements over concerns about data exposure to a competitor; Scale AI disputes this. Prospective customers with competitive sensitivity toward Meta should factor the ownership situation into their evaluation.

Appen is one of the oldest providers, with 1M+ contributors across 170 countries. Strong for multilingual datasets and search evaluation, though it has faced several years of revenue decline and restructuring.

Labelbox is a data annotation and management platform for teams running workflows in-house. It supports image, video, audio, text, and point cloud data, and reports SOC 2 and ISO 27001 certification as well as GDPR compliance.

TELUS International AI (which absorbed Lionbridge AI) offers managed data labeling services focused on multilingual tasks, speech recognition evaluation, and large structured AI training data programs.

How to Choose the Right Annotation Services Provider for Computer Vision and NLP

Match expertise to your project needs. Data annotation projects vary enormously in complexity. Medical imaging, legal NLP, generative AI development, and LLM evaluation each require different annotator skills and tools. Request evidence of prior work in your specific area, not just a general capabilities list, and confirm how annotators are recruited, tested, and retained.

Evaluate AI-assisted pre-labeling critically. Pre-labeling reduces the cost and turnaround time of producing training data, but low-confidence predictions need human review. Ask specifically how the provider handles uncertain model outputs and what quality control it applies to AI-assisted annotations before they enter your pipeline.
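One way to picture such a quality-control step is a confidence-based router. The sketch below is hypothetical: the `PreLabel` record, the 0.9 acceptance threshold, and the 5% audit rate are assumptions for illustration. Low-confidence pre-labels go to human review, and a random sample of confident ones is still spot-checked, because a confident model can be confidently wrong.

```python
import random
from dataclasses import dataclass


@dataclass
class PreLabel:
    item_id: str
    label: str
    confidence: float  # model score in [0, 1]


def route_prelabels(prelabels, accept_threshold=0.9, audit_rate=0.05, seed=0):
    """Split model pre-labels into accepted, spot-check, and human-review queues."""
    rng = random.Random(seed)  # seeded so audit sampling is reproducible
    accepted, spot_check, review = [], [], []
    for p in prelabels:
        if p.confidence < accept_threshold:
            review.append(p)  # uncertain: a human labels or corrects it
        elif rng.random() < audit_rate:
            spot_check.append(p)  # confident, but sampled for a QA audit
        else:
            accepted.append(p)
    return accepted, spot_check, review


batch = [
    PreLabel("a", "car", 0.97),
    PreLabel("b", "pedestrian", 0.62),
    PreLabel("c", "car", 0.91),
]
accepted, spot_check, review = route_prelabels(batch, audit_rate=0.0)
print([p.item_id for p in review])  # ['b']
```

Asking a provider where its equivalent thresholds sit, and how audit results feed back into them, is a concrete way to probe its QA process.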

Verify compliance and data security posture. For sensitive data — medical records, proprietary video, financial documents used in fraud detection — confirm relevant certifications and ask about on-premises deployment and audit trail capabilities. As AI transparency regulations tighten, provenance documentation is increasingly a procurement requirement, not just a nice-to-have.
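To make "provenance documentation" concrete, a minimal audit-trail entry can tie a file's content hash to who labeled it and under which guideline version. The field names below are hypothetical and do not reflect any provider's format (including Cogito's DataSum); the point is that a content hash lets an auditor verify that a labeled file has not changed since annotation.

```python
import hashlib
import json
import os
import tempfile
from datetime import datetime, timezone


def provenance_record(path: str, annotator_id: str, guideline_version: str) -> dict:
    """Build one hypothetical audit-trail entry for a labeled file."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        # Hash in chunks so large video files need not fit in memory.
        for chunk in iter(lambda: f.read(65536), b""):
            h.update(chunk)
    return {
        "file": path,
        "sha256": h.hexdigest(),
        "annotator_id": annotator_id,
        "guideline_version": guideline_version,
        "labeled_at": datetime.now(timezone.utc).isoformat(),
    }


# Usage with a throwaway file standing in for an image.
with tempfile.NamedTemporaryFile(delete=False, suffix=".jpg") as tmp:
    tmp.write(b"fake image bytes")
record = provenance_record(tmp.name, annotator_id="ann-042", guideline_version="v1.3")
print(json.dumps(record, indent=2))
os.remove(tmp.name)
```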

Check pipeline integration. API quality, SDK support, and export format flexibility matter as much as annotation quality for data scientists running active learning loops or iterative fine-tuning workflows across large data volumes. A provider with strong annotation but poor tooling integration can create bottlenecks that outweigh the quality benefit.
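For context on what an active learning loop asks of a provider's API: each round, the model scores unlabeled data, the least certain samples are exported for annotation, and the labels come back for retraining. A minimal sketch of the selection step, using entropy-based uncertainty sampling (one common strategy among several; the function is hypothetical, not a vendor API):

```python
import numpy as np


def select_for_labeling(probs: np.ndarray, budget: int) -> np.ndarray:
    """Pick the `budget` samples with the highest predictive entropy.

    probs: array of shape (n_samples, n_classes) with predicted class probabilities.
    Returns indices ordered from most to least uncertain.
    """
    eps = 1e-12  # avoids log(0) for one-hot predictions
    entropy = -np.sum(probs * np.log(probs + eps), axis=1)
    return np.argsort(-entropy)[:budget]


probs = np.array([
    [0.98, 0.01, 0.01],  # confident
    [0.34, 0.33, 0.33],  # nearly uniform: most uncertain
    [0.60, 0.30, 0.10],
])
print(select_for_labeling(probs, budget=2))  # [1 2]
```

Exporting the selected samples and re-importing their labels are the steps where API and export-format quality show up in practice.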

Always run a pilot. A paid pilot on a representative sample of your actual data is the most reliable way to validate annotation quality, turnaround time, and communication before committing to full-scale engagement. Most providers support this; those that don't are worth treating with caution.
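When scoring a pilot, it helps to compare the provider's labels against a small gold set you labeled yourself and to report a confidence interval rather than a single accuracy number, since pilot samples are small. A minimal sketch using the Wilson score interval; the 470-of-500 result is a made-up example:

```python
import math


def wilson_interval(correct: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for accuracy = correct / total (z=1.96 gives ~95%)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = correct / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return center - half, center + half


# Hypothetical pilot: 470 of 500 gold-set items labeled correctly (94% point accuracy).
lo, hi = wilson_interval(470, 500)
print(f"accuracy 95% CI: {lo:.3f} to {hi:.3f}")
```

If the lower bound falls below the accuracy your model needs, the pilot has not yet demonstrated sufficient quality, even when the point estimate looks fine.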

Why the Annotation Process Requires Accurate Labeling Expertise

Subjectivity and edge cases. Many tasks involve genuine judgment calls, such as what counts as a hazardous driving scenario or how to label images with ambiguous pathology findings. Subjectivity introduces variability across annotators, and managing inter-annotator agreement requires deliberate protocol design. Edge cases are systematically underrepresented in typical annotated datasets yet are the most safety-critical samples. This is especially true for autonomous vehicles and medical AI, where label quality directly affects model accuracy and deployment safety.
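Inter-annotator agreement is usually measured with a chance-corrected statistic. A minimal sketch of Cohen's kappa for two annotators labeling the same items (the labels are invented for illustration; for more than two annotators, Fleiss' kappa or Krippendorff's alpha are common alternatives):

```python
from collections import Counter


def cohens_kappa(labels_a: list[str], labels_b: list[str]) -> float:
    """Cohen's kappa for two annotators who labeled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    # Agreement expected by chance, from each annotator's label frequencies.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / n**2
    if expected == 1.0:
        return 1.0  # both used one identical label throughout; kappa is undefined
    return (observed - expected) / (1 - expected)


a = ["hazard", "safe", "safe", "hazard", "safe", "safe"]
b = ["hazard", "safe", "hazard", "hazard", "safe", "safe"]
print(round(cohens_kappa(a, b), 3))  # 0.667
```

Here the annotators agree on 5 of 6 items, but half of that agreement would be expected by chance from their label frequencies, so kappa is about 0.67 rather than 0.83.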

Cost variation by modality. Annotation costs vary significantly by modality and label type. Semantic segmentation and polygon annotation of medical images or 3D point cloud data can cost 10–50x more than basic bounding boxes because of the expertise required. Video multiplies costs further because objects must be tracked consistently across frames. Teams working with large data volumes should build these differences into budgets early rather than discovering them mid-contract.
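These multipliers compound, so it helps to scope them explicitly. A rough sketch, assuming a hypothetical base price of $0.05 per bounding box (within the $0.02–$0.09 range quoted earlier) and treating each video frame as a separately labeled instance, which overstates cost when tools interpolate between keyframes:

```python
def scope_budget(num_objects: int, base_price: float,
                 complexity_multiplier: float = 1.0, frames_per_track: int = 1) -> float:
    """Rough budget: objects x base price x label-type multiplier x frames per tracked object."""
    return num_objects * base_price * complexity_multiplier * frames_per_track


scenarios = {
    "bounding boxes": dict(complexity_multiplier=1),
    "segmentation (10x)": dict(complexity_multiplier=10),
    "segmentation (50x)": dict(complexity_multiplier=50),
    "boxes tracked over 30 frames": dict(frames_per_track=30),
}
for name, kwargs in scenarios.items():
    print(f"{name}: ${scope_budget(10_000, 0.05, **kwargs):,.0f}")
```

For 10,000 objects, that gives roughly $500 for boxes, $5,000 to $25,000 for segmentation, and $15,000 for per-frame video tracking.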

Workforce quality and labor practices. High-quality annotation depends on retaining experienced annotators over time, not cycling through transient gig workers. The industry has faced growing scrutiny over contractor classification, pay transparency, and worker protections. These concerns are both ethical and practical: workforce instability directly affects the consistency of annotated data and the ability to reliably meet deadlines at scale.

Conclusion — Choosing the Right AI and Machine Learning Data Partner

No single provider excels at scale, generative AI support, automation, compliance, and cost simultaneously. The right choice depends on your data types, regulatory environment, and how annotation fits into your broader AI pipeline. Being selective about which data to label, rather than maximizing raw volume, is often the most cost-effective strategy, particularly for computer vision projects or advanced tasks that require specialist annotators. Run a pilot first, verify quality independently, and treat data security and provenance as first-class requirements.
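One common way to be selective is diversity-based selection over embeddings. A minimal sketch of greedy k-center (core-set) selection, which repeatedly picks the sample farthest from everything already chosen; this is a generic technique, not a description of any specific product's selection algorithm, and the toy points are invented:

```python
import numpy as np


def k_center_greedy(embeddings: np.ndarray, k: int, first: int = 0) -> list[int]:
    """Greedy k-center selection: return indices of up to k mutually distant samples."""
    selected = [first]
    # Distance from every sample to its nearest already-selected sample.
    min_dist = np.linalg.norm(embeddings - embeddings[first], axis=1)
    while len(selected) < min(k, len(embeddings)):
        nxt = int(np.argmax(min_dist))
        if min_dist[nxt] == 0:
            break  # every remaining sample duplicates a selected one
        selected.append(nxt)
        new_dist = np.linalg.norm(embeddings - embeddings[nxt], axis=1)
        min_dist = np.minimum(min_dist, new_dist)
    return selected


# Three tight clusters; a budget of 3 should take one sample from each.
points = np.array([[0.0, 0.0], [0.1, 0.0], [10.0, 0.0], [10.0, 0.1], [0.0, 10.0]])
print(k_center_greedy(points, k=3))  # [0, 3, 4]
```

With a labeling budget of three, the selection covers every cluster instead of spending two labels on near-identical samples.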

Last updated: April 2026. Pricing, product features, and company details in this market change frequently. Verify current information directly with providers before making procurement decisions.
